Ariadne's Thread in the Cloud: AI-Driven Architecture Comprehension

In July 2019, an individual exploited a server-side request forgery (SSRF) vulnerability in a misconfigured web application firewall to access temporary IAM credentials on Capital One's cloud infrastructure. Those credentials, attached to an overly permissive IAM role, granted access to storage buckets containing personal data on approximately 100 million people in the United States and 6 million in Canada, primarily credit card applicants.[1]

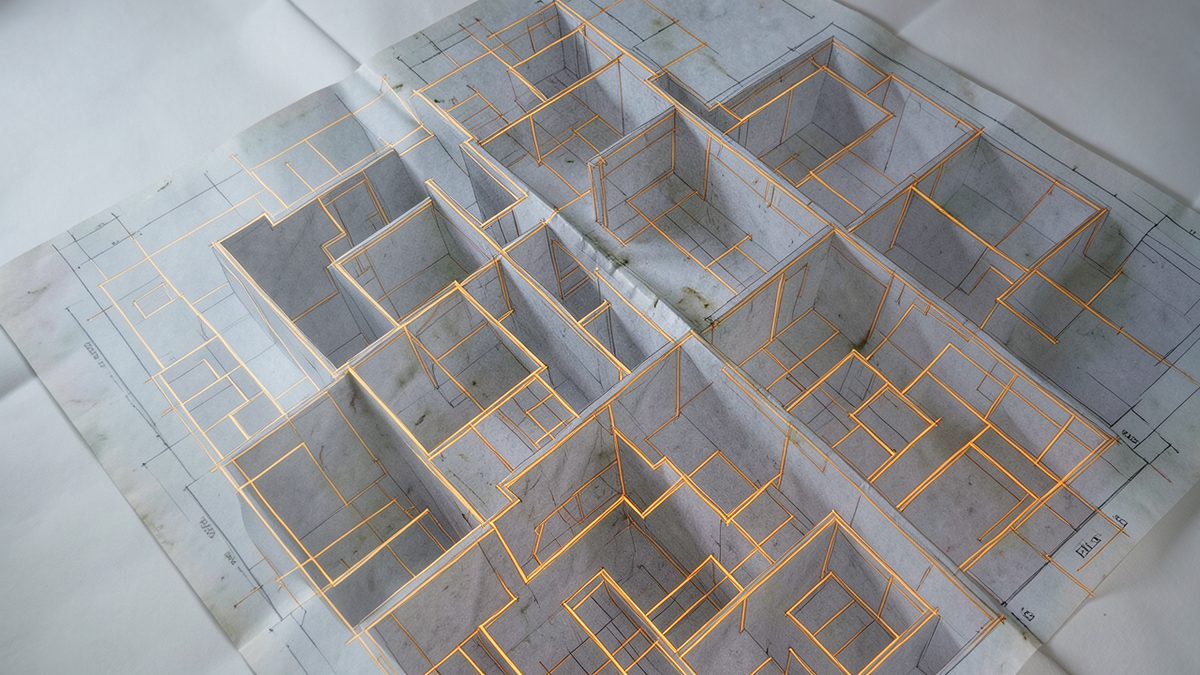

The attack didn't require sophisticated tooling. It required finding a path through the labyrinth that the defenders hadn't mapped. A misconfigured firewall connected to an overly permissive role connected to unprotected storage. Each component was individually comprehensible. The vulnerability lived in the interaction between them, in a corridor that nobody had traced end to end.

This is the defining challenge of cloud security and cloud architecture more broadly. The individual components are well-documented. The interactions between them, across hundreds of services, thousands of configurations, and tens of thousands of policies, form a labyrinth that exceeds what any single person can hold in their head.

The Cloud as Unplanned Labyrinth

In Greek mythology, the Labyrinth of Crete was designed by a single architect, Daedalus, to serve a single purpose: contain the Minotaur. It was complex by design. Cloud architectures are complex by accumulation. Nobody designs the whole maze. It grows organically as teams add services, projects add infrastructure, and compliance requirements add policies.

A single major cloud provider describes itself as offering over 200 fully featured services, and a community-maintained tracker lists more than 400 distinct service entries, with the exact count depending on how services and sub-features are categorized.[2] Each service has its own configuration model, its own IAM integration, its own failure modes, and its own interactions with other services. An organization using even a fraction of these services creates a web of configurations that nobody designed as a whole.

IAM policies are a particularly dense region of this labyrinth. A moderately complex cloud environment can accumulate hundreds or thousands of IAM policies governing who and what can access which resources under which conditions. These policies interact in ways that are difficult to reason about: a permissive policy on one role can create an escalation path that bypasses restrictions elsewhere. The Capital One breach exploited exactly this kind of interaction, a path through the labyrinth that existed because of how policies composed, not because any single policy was obviously wrong.

The labyrinth grows in other dimensions too. Network configurations (security groups, NACLs, VPC peering, transit gateways) create a topology that determines which services can communicate with which others. Resource configurations (instance types, storage classes, encryption settings) determine how data is stored and processed. Cross-account trust relationships create corridors between what were supposed to be separate mazes. Each layer adds complexity that interacts with every other layer.

The Map Problem: Architecture Diagrams That Lie

Traditional approaches to understanding cloud architecture rely on diagrams: boxes and arrows showing services and their connections. These diagrams are useful when they're accurate. The problem is that they're almost never accurate for long.

Cloud infrastructure changes constantly. New services are deployed. Old services are modified. Configurations drift from their intended state. Temporary resources created for testing persist in production. In many organizations, the diagram drawn last quarter may not accurately reflect the infrastructure running today. The map diverges from the territory, and the territory changes with every deployment.

Infrastructure as Code (IaC) tools like Terraform and CloudFormation represent an attempt to make the labyrinth legible by defining infrastructure in version-controlled templates. When used consistently, IaC means the intended state of the infrastructure is documented in code. But IaC has its own gap: the intended state (what the template says) and the actual state (what's running in the cloud) can diverge through manual changes, failed deployments, or resources created outside the IaC pipeline. This divergence, sometimes called "infrastructure drift," creates corridors in the labyrinth that don't appear on any map.
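The gap between intended and actual state can be illustrated with a small comparison. The following is a minimal sketch, not how Terraform or CloudFormation detect drift: it assumes both states have already been exported into plain dictionaries keyed by a resource ID, and the resource names, attributes, and the `detect_drift` function are hypothetical.

```python
from typing import Any

State = dict[str, dict[str, Any]]  # resource ID -> attribute map (assumed shape)


def detect_drift(intended: State, actual: State) -> dict[str, Any]:
    """Compare the intended (template) state with the actual (live) state."""
    # Resources declared in the template but absent from the live environment.
    missing = sorted(set(intended) - set(actual))
    # Resources running live that no template declares.
    unmanaged = sorted(set(actual) - set(intended))
    # Resources present in both whose attributes disagree.
    changed: dict[str, dict[str, tuple[Any, Any]]] = {}
    for rid in sorted(set(intended) & set(actual)):
        want, have = intended[rid], actual[rid]
        diffs = {
            key: (want.get(key), have.get(key))
            for key in sorted(set(want) | set(have))
            if want.get(key) != have.get(key)
        }
        if diffs:
            changed[rid] = diffs
    return {"missing": missing, "unmanaged": unmanaged, "changed": changed}


# Hypothetical example data: one bucket made public by hand, one leftover test VM.
intended = {
    "bucket-logs": {"encrypted": True, "public": False},
    "web-sg": {"ingress_ports": [443]},
}
actual = {
    "bucket-logs": {"encrypted": True, "public": True},
    "web-sg": {"ingress_ports": [443]},
    "test-vm-17": {"instance_type": "large"},
}

report = detect_drift(intended, actual)
print(report)
```

In this toy data the "unmanaged" entry is the temporary test resource that persisted, and the "changed" entry is the manual edit that no template records. Both are corridors missing from the map.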

AI as the Thread: Mapping What's Actually There

This is where AI-assisted cloud tooling is beginning to offer something genuinely new. Rather than relying on static diagrams or IaC templates that may be out of date, AI-powered tools can scan live infrastructure and generate current maps of what's actually deployed, how it's configured, and how the components interact.

Cloud Security Posture Management (CSPM) platforms represent one category of this tooling. These platforms continuously scan cloud environments for misconfigurations, compliance violations, and security risks, increasingly using AI to prioritize findings by actual exploitability rather than theoretical severity.[3] The approach parallels the reachability analysis discussed in the context of dependency graphs: not just "this configuration is non-standard" but "this configuration creates an exploitable path from the internet to your data."

Some CSPM tools now offer attack path analysis: tracing the chain of configurations that an attacker could exploit to move from an initial foothold to a high-value target. In the Capital One breach, the attack path was SSRF vulnerability in a misconfigured WAF → temporary credentials for an overly permissive IAM role → unprotected S3 buckets. An AI tool capable of tracing this path before an attacker did could have flagged the risk as a composite of individually unremarkable configurations. The Minotaur wasn't in any single corridor. It was in the path between them.
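At its core, attack path analysis is a reachability search over a graph of "can reach" or "can assume" relationships. The sketch below uses a breadth-first search over a hand-written graph. It is a deliberately simplified illustration, not the algorithm of any particular CSPM product, and the node names and edges are hypothetical stand-ins for the chain described above.

```python
from collections import deque
from typing import Optional


def find_attack_path(
    edges: dict[str, list[str]], source: str, target: str
) -> Optional[list[str]]:
    """Return a shortest path from source to target, or None if unreachable."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in edges.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append(path + [nxt])
    return None


# Hypothetical graph: an edge means "a foothold here can be used to reach there".
edges = {
    "internet": ["waf"],
    "waf": ["iam-role"],          # assumed: SSRF exposes the role's credentials
    "iam-role": ["s3-buckets"],   # assumed: role permits reading the buckets
    "batch-job": ["s3-buckets"],  # internal only, not reachable from the internet
}

path = find_attack_path(edges, "internet", "s3-buckets")
print(" -> ".join(path) if path else "no path")
```

No single edge in this graph looks alarming on its own. The finding is the existence of a complete path from the internet to the data, which is why tools that score configurations one at a time can miss it.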

Cost as a Labyrinth Signal

Security isn't the only dimension where cloud complexity hides problems. Cost is another.

Industry estimates suggest that organizations waste roughly 20-30% of their cloud spending on idle resources, oversized instances, and unused commitments.[4] This waste is a labyrinth problem: the resources exist in the maze, consuming budget, but nobody has traced the path to determine whether they're still needed. A development environment spun up for a project that ended six months ago. An oversized database instance provisioned for a load spike that never recurred. Reserved capacity purchased for a service that was later decommissioned.

AI-powered cost optimization tools aim to trace these paths, identifying resources that are unused, underutilized, or redundant. The FinOps discipline, which applies financial accountability practices to cloud spending, increasingly relies on AI to make the cost labyrinth navigable.[5] The tools can surface patterns that are difficult for humans to detect at scale: for example, a cluster of instances that spike to full utilization briefly each hour and sit idle the rest of the time, a storage bucket that hasn't been accessed in months, or a load balancer routing traffic to a single instance that could be served directly.
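The utilization patterns mentioned above can be approximated with very simple statistics before any machine learning is involved. The following sketch classifies a resource from a series of CPU utilization samples (in percent). The thresholds, category names, and sample data are assumptions chosen for illustration, not values drawn from any FinOps tool.

```python
from statistics import mean


def classify_utilization(
    samples: list[float],
    idle_peak: float = 5.0,   # assumed: never exceeding 5% counts as idle
    low_mean: float = 20.0,   # assumed: averaging under 20% counts as low
    high_peak: float = 80.0,  # assumed: reaching 80% counts as a real spike
) -> str:
    """Label a resource as idle, bursty, underutilized, or ok."""
    if not samples:
        return "no-data"
    avg, peak = mean(samples), max(samples)
    if peak < idle_peak:
        return "idle"
    if avg < low_mean and peak >= high_peak:
        # Mostly idle but briefly saturated: a candidate for scheduling or autoscaling.
        return "bursty"
    if avg < low_mean:
        return "underutilized"
    return "ok"


# Hypothetical fleet with one sample series per resource.
fleet = {
    "forgotten-dev-env": [1, 2, 1, 0, 1, 2],
    "hourly-batch": [100, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2, 2],
    "oversized-db": [10, 12, 8, 15],
    "api-server": [55, 60, 70, 65],
}

labels = {name: classify_utilization(s) for name, s in fleet.items()}
print(labels)
```

Separating "bursty" from "underutilized" matters because the remedies differ: the first usually calls for scheduling or autoscaling, the second for a smaller instance. A label like "idle" is still only a prompt for a human to confirm the resource is unneeded.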

Cost optimization is labyrinth simplification in financial terms. Every unused resource identified and removed is a corridor eliminated from the maze. Every right-sized instance is a corridor made narrower and easier to navigate. The thread here isn't just finding problems; it's finding corridors that can be demolished.

The Blast Radius Question

One of the most valuable applications of AI to cloud architecture is answering the question: if this component fails, what else fails?

In a complex cloud environment, dependencies between services are often implicit rather than explicit. Service A calls service B, which reads from database C, which replicates to database D in another region. A failure in database C doesn't just affect service B; it affects service A, everything that calls service A, and potentially everything that depends on the replication to database D. Tracing these dependency chains manually, across hundreds of services, is the kind of labyrinth navigation problem that exceeds human working memory.

AI-assisted architecture analysis tools aim to map these dependencies by analyzing network traffic patterns, API call logs, and infrastructure configurations. The goal is a dependency graph that shows not just what's connected to what, but what the blast radius of a failure in any given component would be. This is Ariadne's thread applied to resilience: trace the path from a potential failure point to every service it would affect, and you have better information about where to invest in redundancy, circuit breakers, and fallback mechanisms.
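Once a dependency graph exists, computing a blast radius is a traversal in the reverse direction: start at the failed component and collect everything that depends on it, directly or transitively. The sketch below assumes the graph has already been discovered and is given as a "depends on" map. The `frontend` and `reporting` entries are hypothetical additions to the service A through database D chain described earlier.

```python
from collections import defaultdict, deque


def blast_radius(depends_on: dict[str, list[str]], failed: str) -> set[str]:
    """Return every component that transitively depends on the failed one."""
    # Invert the map: for each component, who depends on it?
    dependents: dict[str, set[str]] = defaultdict(set)
    for component, deps in depends_on.items():
        for dep in deps:
            dependents[dep].add(component)

    affected: set[str] = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for component in dependents.get(node, ()):
            # The membership check also guards against cycles in the graph.
            if component != failed and component not in affected:
                affected.add(component)
                queue.append(component)
    return affected


# Hypothetical dependency map: component -> things it depends on.
depends_on = {
    "frontend": ["service-a"],
    "service-a": ["service-b"],
    "service-b": ["database-c"],
    "database-d": ["database-c"],  # replica fed by database C
    "reporting": ["database-d"],
}

print(sorted(blast_radius(depends_on, "database-c")))
print(sorted(blast_radius(depends_on, "database-d")))
```

Comparing the two results shows why the question is worth asking per component: a failure in database C reaches every other node in this toy graph, while a failure in database D reaches only the reporting consumer.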

The Human-AI Partnership

AI-assisted cloud architecture tools are useful, but they don't eliminate the need for human judgment. AI can map the labyrinth, identify risks, and surface optimization opportunities. It can't decide which corridors to keep and which to demolish. Those decisions involve trade-offs between security, cost, performance, reliability, and organizational priorities that require human context.

The most effective pattern is a partnership: AI maps the labyrinth and highlights the corridors that deserve attention, and humans decide what to do about them. AI identifies the overly permissive IAM role; a human decides whether to restrict it or accept the risk. AI flags the unused resources; a human confirms they're genuinely unused before deleting them. AI traces the blast radius of a potential failure; a human decides whether the risk justifies the cost of mitigation.

The danger, as with all AI-assisted tooling, is over-reliance. An AI tool that reports "no critical findings" may simply have missed a path through the labyrinth that it wasn't trained to recognize. The Capital One breach exploited a combination of SSRF and IAM misconfiguration that many security tools of that era didn't model as a composite risk. The labyrinth always has corridors that the thread hasn't traced.

The cloud is a labyrinth that nobody designed as a whole. AI is becoming a thread that can trace paths through it at a scale and speed that human analysis can't match. But the goal shouldn't be to navigate the labyrinth more efficiently. It should be to make the labyrinth simpler: fewer services, fewer policies, fewer configurations, fewer corridors where Minotaurs can hide. Every resource removed, every policy simplified, every unnecessary service decommissioned is a permanent reduction in the maze's complexity. The best thread is the one that leads you to walls you can tear down.

References

[1] The Capital One breach (CVE not assigned; disclosed July 2019). See Dark Reading, "Capital One Breach Conviction Exposes Scale of Cloud Entitlement Risk," December 2023. https://www.darkreading.com/cloud/capital-one-breach-conviction-exposes-scale-of-cloud-entitlement-risk See also Krebs on Security, "Capital One Data Theft Impacts 106M People," July 2019. https://krebsonsecurity.com/2019/07/capital-one-data-theft-impacts-106m-people/

[2] AWS describes itself as offering "over 200 fully featured services." See aws-services.info for a community-maintained tracker that lists over 400 distinct service entries as of April 2026. https://www.aws-services.info/ The exact count depends on how services and sub-features are categorized.

[3] For an overview of CSPM capabilities and the evolution toward AI-assisted prioritization, see Reach Security, "Cloud Security Posture Management (CSPM): 2026 Guide." https://www.reach.security/blog/exploring-cspm-what-is-cloud-security-posture-management

[4] IDC estimates 20-30% of cloud spend is wasted annually. See IBM, "FinOps drives smarter cloud and AI decisions for government," 2025. https://www.ibm.com/think/insights/how-fin-ops-drives-smarter-cloud-and-ai-for-government Flexera's 2025 State of the Cloud report cites a similar figure of approximately 27%.

[5] The FinOps Foundation defines FinOps as "an evolving cloud financial management discipline and cultural practice." For the role of AI in FinOps, see CloudZero, "FinOps in the AI Era," 2025. https://www.cloudzero.com/blog/ai-costs-business-efficiency-report/