The Labyrinth and the Thread: Why We Build Systems We Can't Navigate

King Minos had a problem. The Minotaur, a creature born of divine punishment and human transgression, was too dangerous to let roam free and too shameful to leave visible. So Minos commissioned Daedalus, regarded in Greek tradition as the most skilled craftsman of his age, to build a structure that could contain it. Daedalus built the Labyrinth: a maze so intricate, so densely folded with false turns and dead ends, that anything entering it would never find its way out.[1]

The containment worked. The Minotaur was trapped. But the Labyrinth created a new problem that was, in some ways, harder than the original one. The structure was so complex that even Daedalus himself could barely navigate it. When Minos later confined Daedalus on Crete, the architect who designed every corridor found himself unable to leave the island by land or sea, trapped by the very king he'd served.[2] The tool built to solve a problem had become a problem of equal magnitude.

This is a pattern that anyone who builds software will recognize.

The Solution That Becomes the Problem

The Labyrinth wasn't poorly designed. It did exactly what it was supposed to do: contain something dangerous within a structure too complex to escape. The difficulty wasn't a flaw. It was the feature. But features have consequences. According to Plutarch, Athens agreed to send a tribute of seven youths and seven maidens to Crete every nine years, where they were shut inside the Labyrinth. In most versions of the myth, none returned.[3] The maze that contained the monster also consumed everyone sent to deal with it.

Technology follows the same pattern with remarkable consistency. Microservices architectures are built to contain the problems of monolithic systems: tight coupling, slow deployments, team bottlenecks. They succeed. But the resulting architecture, with hundreds of services communicating across networks, introduces its own complexity: distributed tracing challenges, cascading failures, configuration sprawl, and operational overhead that can dwarf the original monolith's problems. The containment works. The containment is also the new danger.

Cloud infrastructure solves the problem of physical capacity planning. It also creates a labyrinth of IAM policies, security groups, cross-account trust relationships, and service configurations that no single person fully comprehends. Dependency management solves the problem of reinventing common functionality. It also creates graphs with thousands of nodes, where a vulnerability in a transitive dependency five levels down can compromise the entire application.
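To make the transitive-dependency point concrete, here is a minimal sketch in Python. The dependency graph and every package name in it are invented for illustration; a real project would read this data from its lockfile or package manager. The sketch uses a breadth-first search to find the shortest chain from the application to a package that the application never imports directly.

```python
from collections import deque

# Hypothetical dependency graph: package -> direct dependencies.
# Every name here is invented for illustration.
DEPENDENCIES = {
    "app": ["web-framework", "billing-client"],
    "web-framework": ["http-core", "template-engine"],
    "billing-client": ["http-core"],
    "http-core": ["tls-wrapper"],
    "tls-wrapper": ["asn1-parser"],
    "asn1-parser": ["legacy-codec"],
    "template-engine": [],
    "legacy-codec": [],
}


def find_dependency_path(graph, root, target):
    """Return the shortest chain of packages from root to target, or None."""
    queue = deque([[root]])
    seen = {root}
    while queue:
        path = queue.popleft()
        if path[-1] == target:
            return path
        for dep in graph.get(path[-1], []):
            if dep not in seen:
                seen.add(dep)
                queue.append(path + [dep])
    return None


path = find_dependency_path(DEPENDENCIES, "app", "legacy-codec")
print(" -> ".join(path))
print(f"levels below the application: {len(path) - 1}")
```

In this toy graph the vulnerable package sits five levels below the application, even though the application declares only two direct dependencies. The search itself is trivial. Knowing that you need to run it, and against which graph, is the hard part.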

Each architectural decision is a corridor. Each corridor solves a real problem. But the aggregate maze, the thing that emerges from thousands of individually reasonable decisions, exceeds the cognitive capacity of any single mind. The labyrinth isn't the result of bad engineering. It's the result of good engineering, repeated until the interactions between solutions become harder to manage than the original problems.

Theseus, Ariadne, and Two Different Problems

The myth's resolution is instructive. Theseus volunteers to enter the Labyrinth and kill the Minotaur. He's brave, strong, and capable. But bravery and strength are tools for the monster, not for the maze. Without help, Theseus would have killed the Minotaur and then died lost in the corridors, a hero whose victory was meaningless because he couldn't find his way back.

Ariadne, daughter of Minos, gives Theseus a ball of thread. He unwinds it as he goes, traces a path to the Minotaur, kills the creature, and follows the thread back out.[4] The thread doesn't help him fight. It helps him navigate. These are different problems requiring different tools, and the myth is precise about this: the sword is for the Minotaur, the thread is for the Labyrinth. Confusing the two is fatal.

This distinction maps directly onto how engineering teams handle complex systems. The "Minotaur" is the specific problem: the bug, the vulnerability, the performance bottleneck, the outage. Engineers are generally good at killing Minotaurs. Given a well-defined problem, they can solve it. The harder challenge is the labyrinth surrounding the problem: finding the right service in a mesh of hundreds, tracing a request through layers of abstraction, understanding which configuration change three months ago introduced the regression, navigating the dependency graph to locate the vulnerable library.

The navigation problem often takes longer than the fix. A senior engineer might spend hours tracing the cause of an outage and minutes deploying the patch. In many incidents, the Minotaur is straightforward. The Labyrinth is the real challenge.

Bounded Rationality and the Limits of Mental Maps

Herbert Simon, the political scientist and cognitive researcher who received the Nobel Memorial Prize in Economic Sciences in 1978 for his work on decision-making within organizations, introduced the concept of "bounded rationality" in the 1950s.[5] Simon's argument was that humans generally don't optimize, because we can't. Our working memory, our attention, our ability to hold complex systems in our heads, all of these are finite. Instead of finding the best solution, we tend to find one that's good enough given our cognitive constraints. Simon called this "satisficing."

Bounded rationality explains why labyrinths become unnavigable. Each corridor is comprehensible on its own. An engineer can understand a single service, a single configuration file, a single dependency chain. But the interactions between hundreds of services, thousands of configurations, and large codebases exceed what any individual can hold in working memory simultaneously. The labyrinth is finite, but it's larger than the mind trying to navigate it.

This is where institutional knowledge becomes critical, and fragile. Senior engineers carry mental maps of the labyrinth. They know that service A talks to service B through an undocumented queue. They know that the configuration in file X overrides the configuration in file Y, but only in production. They know that the dependency on library Z was pinned to an old version because the new version breaks a specific edge case in the billing pipeline. These maps are invaluable. They're also stored in human memory, which means they leave when the person leaves. The labyrinth remains. The map evaporates.

Borges and the Labyrinth as Condition

Jorge Luis Borges, the Argentine writer who returned to the labyrinth obsessively throughout his work, understood something about mazes that engineers often miss. In stories like "The Garden of Forking Paths" and "The Library of Babel," Borges treats the labyrinth not as a problem to be solved but as a condition to be inhabited.[6] The Library of Babel contains every possible book, every possible combination of characters. It therefore contains every truth and every falsehood, every meaningful sentence and every meaningless one. The library is complete, and it is useless, because finding anything meaningful within it is effectively impossible.

Borges's insight is that complexity doesn't require infinity to become unnavigable. A finite structure with enough internal connections can be as disorienting as an infinite one. A microservices architecture with 300 services isn't infinite. A cloud account with 2,000 IAM policies isn't infinite. But the combinatorial interactions between those components create a space that might as well be infinite, from the perspective of a human trying to reason about it.
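A rough back-of-the-envelope calculation shows how quickly a finite system outgrows a human reader. The sketch below counts only possible pairs of components, which is an assumption and an upper bound: in a real system most pairs never interact, and some interactions involve more than two parts.

```python
import math


def possible_pairs(n):
    """Number of distinct unordered pairs among n components: n * (n - 1) / 2."""
    return math.comb(n, 2)


for label, n in [("services", 300), ("IAM policies", 2000)]:
    print(f"{n} {label}: {possible_pairs(n):,} possible pairs")

# Every possible subset of 300 services, as a count of decimal digits.
subset_digits = len(str(2 ** 300))
print(f"subsets of 300 services: a number with {subset_digits} digits")
```

Three hundred services yield 44,850 possible pairs, and 2,000 policies yield 1,999,000. Neither number is infinite, and neither fits in anyone's working memory.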

This is related to the distinction between complicated and complex that Dave Snowden's Cynefin framework draws.[7] In Snowden's model, a complicated system (a jet engine, a tax return) has many parts, but the relationships between them are generally knowable and predictable. With sufficient expertise, the system can be analyzed and understood. A complex system (an ecosystem, a large-scale distributed architecture) has parts whose interactions can produce emergent behavior that may not be predictable from the parts alone. Understanding the whole thing becomes significantly harder, because the system's behavior is more than the sum of its components.

Many modern software systems arguably fall closer to the complex end of this spectrum than to the merely complicated end. The labyrinth isn't just big. It's emergent. The corridors often interact in ways that nobody designed and nobody fully predicts.

AI as Ariadne's Thread

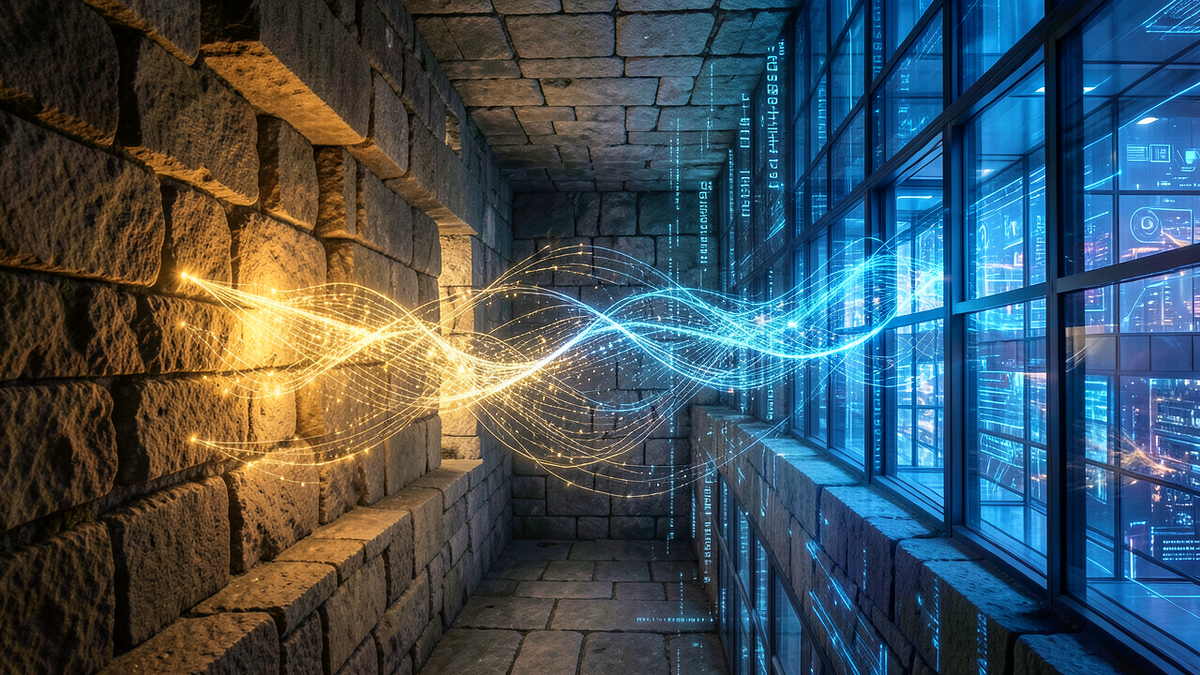

This is where the myth's most famous element becomes most relevant. Ariadne's thread is a navigation tool that works regardless of the labyrinth's complexity. It doesn't require understanding the maze's structure. It doesn't require a map. It simply traces the path you've taken, so you can retrace it. It's a tool for bounded rationality: it compensates for the limits of human memory and spatial reasoning.
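The thread's mechanics can be sketched as a stack: unwind one step when you move forward, rewind one step when you hit a dead end. The Python below is an illustration only. The maze layout and room names are invented, and the walker sees nothing beyond the exits of the room it is standing in, so it never needs a map of the whole structure.

```python
# Hypothetical maze: each room lists the rooms directly reachable from it.
MAZE = {
    "entrance": ["hall", "dead_end_a"],
    "hall": ["fork", "dead_end_b"],
    "fork": ["dead_end_c", "lair"],
    "dead_end_a": [],
    "dead_end_b": [],
    "dead_end_c": [],
    "lair": [],
}


def walk_with_thread(maze, start, goal):
    """Depth-first walk that keeps a thread of the rooms between start and here.

    Returns the thread from start to goal, or None if the goal is unreachable.
    """
    thread = [start]      # the unwound thread: path from start to the current room
    visited = {start}
    while thread:
        here = thread[-1]
        if here == goal:
            return list(thread)
        unexplored = [room for room in maze.get(here, []) if room not in visited]
        if unexplored:
            visited.add(unexplored[0])
            thread.append(unexplored[0])   # step forward, unwinding thread
        else:
            thread.pop()                   # dead end: rewind one room
    return None


thread = walk_with_thread(MAZE, "entrance", "lair")
way_out = list(reversed(thread))
print("way in: ", " -> ".join(thread))
print("way out:", " -> ".join(way_out))
```

The walker wanders into a dead end on the way, but the thread never records it, because rewinding removes the false turn. What remains at the goal is exactly the path worth retracing.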

AI is emerging as a new kind of thread for technical labyrinths. Large language models can process and summarize codebases too large for any individual to hold in memory.[8] AI-assisted observability tools aim to correlate signals across hundreds of services to suggest probable root causes that might take human operators significantly longer to trace.[9] AI-powered security scanners can traverse dependency graphs with thousands of nodes to assess whether a vulnerability is actually reachable from the application's code. AI-driven architecture analysis tools are beginning to map cloud infrastructure and flag misconfigurations, unused resources, and security risks that hide in the interactions between components.

The thread doesn't kill the Minotaur. It tells you where the Minotaur is. It traces the path through the labyrinth so you can reach the problem, solve it, and find your way back. That's a potentially significant capability. For most of software engineering's history, the navigation problem has been addressed primarily through human memory, institutional knowledge, and tribal expertise. AI offers something different: a tool that doesn't retire, doesn't forget, and can hold more of the labyrinth in context than any individual human mind, though with its own limitations in accuracy and reliability.

But the myth carries a warning alongside the promise. Ariadne's thread works because the labyrinth is fixed. The corridors don't move. In software, the labyrinth changes constantly: new deployments, new configurations, new dependencies, new services. A thread that mapped the maze yesterday may lead you into a wall today. And there's a subtler danger: if the thread makes the labyrinth survivable, you may never simplify it. The tool that helps you navigate complexity can also enable you to tolerate more of it than you should.
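The stale-thread risk is easy to illustrate. The sketch below assumes a recorded request path and two snapshots of a service topology, all of them hypothetical. It checks each hop of the recorded path against the current topology and reports the first hop that no longer exists.

```python
def first_broken_hop(topology, path):
    """Return the first (source, destination) hop in path that is missing
    from topology, or None if every hop still exists."""
    for source, destination in zip(path, path[1:]):
        if destination not in topology.get(source, []):
            return (source, destination)
    return None


# Hypothetical topology snapshots: service -> services it calls.
YESTERDAY = {
    "gateway": ["orders"],
    "orders": ["billing"],
    "billing": ["ledger"],
    "ledger": [],
}
TODAY = {
    "gateway": ["orders"],
    "orders": ["payments"],   # a deployment rerouted this call overnight
    "payments": ["ledger"],
    "billing": [],
    "ledger": [],
}

# The thread: a request path recorded against yesterday's system.
recorded_path = ["gateway", "orders", "billing", "ledger"]

print(first_broken_hop(YESTERDAY, recorded_path))
print(first_broken_hop(TODAY, recorded_path))
```

Against yesterday's snapshot the path is intact. Against today's, it breaks at the hop from orders to billing. Any map of a changing system, whether it lives in a senior engineer's head or in an AI tool's index, needs this kind of revalidation before it is trusted.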

Daedalus, confined on Crete by the king he'd served, didn't solve the labyrinth by navigating it better. According to Ovid, he escaped by building wings of feathers and wax and flying over the sea entirely.[2] Sometimes the right response to a labyrinth isn't a better thread. It's fewer corridors.

References

[1] Ovid, Metamorphoses, Book VIII, lines 152–168. Translation by A.S. Kline, 2000. https://ovid.lib.virginia.edu/trans/Metamorph8.htm

[2] Ovid, Metamorphoses, Book VIII, lines 183–235. Daedalus's imprisonment and escape via constructed wings.

[3] Plutarch, Life of Theseus, Sections 15–19. Translation by John Dryden, revised by Arthur Hugh Clough. https://classics.mit.edu/Plutarch/theseus.html

[4] Plutarch, Life of Theseus, Section 19. Ariadne's thread and Theseus's navigation of the Labyrinth.

[5] Herbert A. Simon, Models of Man: Social and Rational, John Wiley & Sons, 1957. See also Herbert A. Simon, "Rational Choice and the Structure of the Environment," Psychological Review, Vol. 63, No. 2, 1956, pp. 129–138.

[6] Jorge Luis Borges, "The Garden of Forking Paths" (1941) and "The Library of Babel" (1941), collected in Ficciones, Grove Press, 1962.

[7] Dave Snowden and Mary E. Boone, "A Leader's Framework for Decision Making," Harvard Business Review, November 2007. https://hbr.org/2007/11/a-leaders-framework-for-decision-making

[8] For a survey of LLM capabilities and limitations in code comprehension, see "Large Language Models (LLMs) for Source Code Analysis," arXiv, March 2025. https://arxiv.org/html/2503.17502 See also "Towards an understanding of large language models in software engineering tasks," Empirical Software Engineering, Vol. 30, 2025. https://link.springer.com/article/10.1007/s10664-024-10602-0

[9] For a comprehensive survey of AI-assisted root cause analysis and AIOps, see "A Survey of AIOps for Failure Management in the Era of Large Language Models," arXiv, June 2024. https://arxiv.org/abs/2406.11213 See also "Exploring LLM-based Agents for Root Cause Analysis," arXiv, March 2024. https://arxiv.org/html/2403.04123v1