The Prisoner's Dilemma: When Rational Choices Lead to Collective Failure

In 1950, mathematicians Merrill Flood and Melvin Dresher at RAND Corporation created a game that would become one of the most studied problems in social science. Two prisoners, separated and unable to communicate, each face a choice: betray the other or stay silent. If both stay silent, they each serve one year. If both betray, they each serve two years. But if one betrays while the other stays silent, the betrayer goes free while the silent one serves three years.

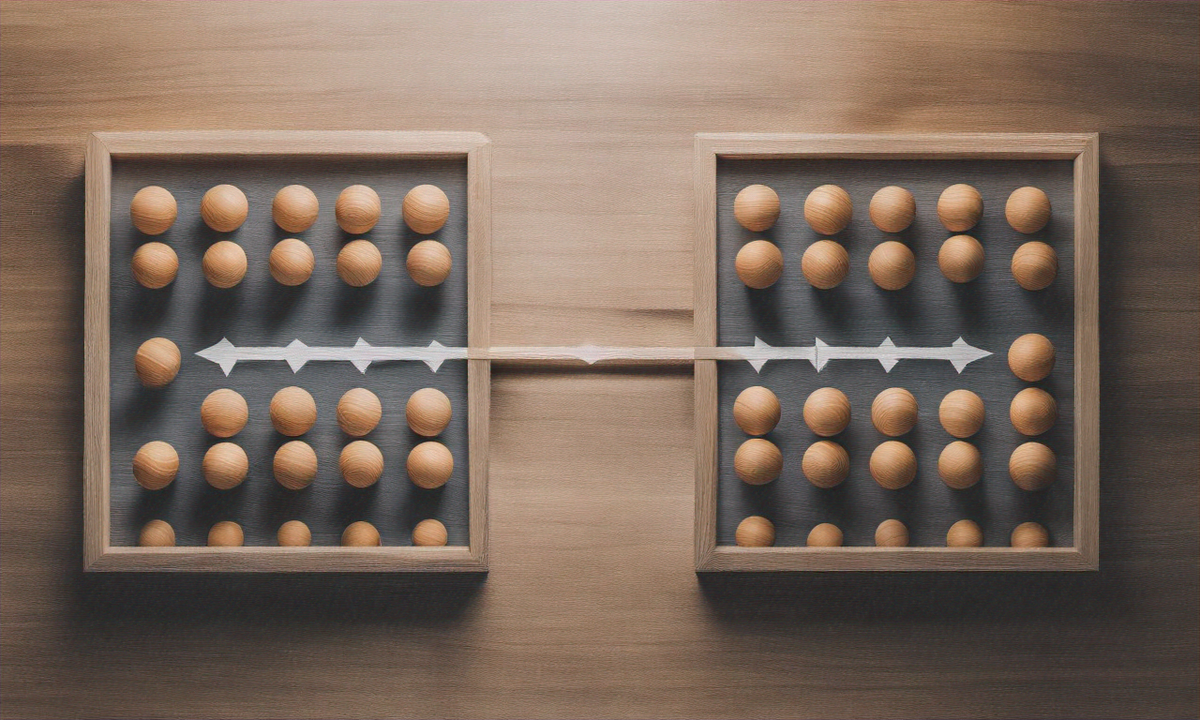

The rational choice—the one that maximizes your individual outcome regardless of what the other person does—is to betray. But when both prisoners follow this logic, they both serve two years. If they had cooperated and stayed silent, they'd each serve only one year. Individual rationality leads to collective irrationality.

Albert Tucker gave the game its prison framing and its name, the prisoner's dilemma, in 1950, and it's been haunting economists, philosophers, and game theorists ever since. Not because it's a clever puzzle, but because it describes something fundamental about human cooperation, and because technology has turned it from a thought experiment into the architecture of our digital lives.

The Mathematics of Betrayal

The prisoner's dilemma isn't just a story—it's a mathematical structure called a game, with players, strategies, and payoffs. The key insight is the difference between two types of outcomes: the Nash equilibrium and the Pareto optimum.

A Nash equilibrium is a state where no player can improve their outcome by changing strategy alone. In the prisoner's dilemma, mutual betrayal is a Nash equilibrium. If you're betraying and I switch to silence, I get the worst outcome (three years). If I'm betraying and you switch to silence, you get the worst outcome. Neither of us can improve by changing alone, so we're stuck betraying each other.

A Pareto optimum is a state where you can't make anyone better off without making someone worse off. Mutual cooperation—both staying silent—is Pareto optimal. We're both better off than in mutual betrayal, and there's no way to improve one person's outcome without hurting the other.

The tragedy is that the Nash equilibrium is not Pareto optimal. Both players would prefer mutual silence to mutual betrayal, yet neither can get there by changing strategy alone. Rational individual choice leads us to an outcome that's worse for everyone. This isn't a failure of logic; it's a structural feature of the game. The incentives are misaligned.
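To make the two definitions concrete, here is a minimal sketch that checks every outcome of the game against both. It uses the prison sentences from the opening story; the names `YEARS`, `is_nash`, and `is_pareto_optimal` are illustrative choices for this sketch, not standard library functions.

```python
from itertools import product

SILENT, BETRAY = "silent", "betray"
MOVES = (SILENT, BETRAY)

# Years in prison for (player A, player B), from the story above.
# Lower is better.
YEARS = {
    (SILENT, SILENT): (1, 1),
    (SILENT, BETRAY): (3, 0),
    (BETRAY, SILENT): (0, 3),
    (BETRAY, BETRAY): (2, 2),
}

def is_nash(a, b):
    """True if neither player can shorten their own sentence by switching alone."""
    a_years, b_years = YEARS[(a, b)]
    a_cannot_improve = all(YEARS[(alt, b)][0] >= a_years for alt in MOVES)
    b_cannot_improve = all(YEARS[(a, alt)][1] >= b_years for alt in MOVES)
    return a_cannot_improve and b_cannot_improve

def is_pareto_optimal(a, b):
    """True if no other outcome is at least as good for both and better for one."""
    a_years, b_years = YEARS[(a, b)]
    for other_a, other_b in YEARS.values():
        no_worse = other_a <= a_years and other_b <= b_years
        strictly_better = other_a < a_years or other_b < b_years
        if no_worse and strictly_better:
            return False
    return True

nash = [o for o in product(MOVES, MOVES) if is_nash(*o)]
pareto = [o for o in product(MOVES, MOVES) if is_pareto_optimal(*o)]
print("Nash equilibria:", nash)
print("Pareto optimal: ", pareto)
```

With these payoffs, the only Nash equilibrium is mutual betrayal, and mutual betrayal is also the only outcome that fails the Pareto test. The two lopsided outcomes pass it as well, since the betrayer who walks free cannot be made better off.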

When the Game Repeats

The prisoner's dilemma becomes more interesting—and more relevant to technology—when it's iterated. Instead of playing once, you play repeatedly with the same partner. Now your choice in one round affects future rounds. Betrayal might win today, but it invites retaliation tomorrow.

In 1980, political scientist Robert Axelrod ran a tournament where computer programs played iterated prisoner's dilemma against each other. The winner wasn't the most sophisticated strategy or the most aggressive. It was Tit-for-Tat: cooperate on the first move, then do whatever your opponent did last time. Cooperate if they cooperated, betray if they betrayed.

Tit-for-Tat succeeded because it was nice (never betrayed first), retaliatory (punished betrayal immediately), forgiving (returned to cooperation after one round of retaliation), and clear (opponents could easily predict its behavior). It showed that cooperation can emerge from self-interest when interactions repeat and reputation matters.
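The logic of Tit-for-Tat is simple enough to sketch in a few lines. The example below is a toy, not a reconstruction of Axelrod's tournament: it reuses the prison sentences from the opening story rather than his scoring, and the function names and the ten-round length are assumptions made for illustration.

```python
# Years served per round for (my move, their move); lower is better.
# "C" = cooperate (stay silent), "D" = defect (betray).
YEARS = {
    ("C", "C"): 1,
    ("C", "D"): 3,
    ("D", "C"): 0,
    ("D", "D"): 2,
}

def tit_for_tat(my_history, their_history):
    """Cooperate first, then copy the opponent's previous move."""
    return their_history[-1] if their_history else "C"

def always_defect(my_history, their_history):
    """Betray every round, regardless of history."""
    return "D"

def play(strategy_a, strategy_b, rounds=10):
    """Return the total years served by each player over `rounds` rounds."""
    hist_a, hist_b = [], []
    total_a = total_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        total_a += YEARS[(move_a, move_b)]
        total_b += YEARS[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return total_a, total_b

print(play(tit_for_tat, tit_for_tat))      # (10, 10)
print(play(tit_for_tat, always_defect))    # (21, 18)
print(play(always_defect, always_defect))  # (20, 20)
```

Tit-for-Tat loses its head-to-head match against the constant defector by three years, the cost of being nice in round one. But add up each strategy's matches and Tit-for-Tat serves 31 years in total against the defector's 38. It wins by accumulating cooperation, not by beating anyone.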

But Tit-for-Tat requires three things: repeated interactions, memory of past behavior, and the ability to identify and punish defectors. Technology often breaks all three.

Why Tech Amplifies the Dilemma

The prisoner's dilemma has always existed in human society. But technology transforms it in three critical ways: scale, speed, and structure.

Scale multiplies the stakes. When two people face a prisoner's dilemma, the cost of mutual defection is limited. When millions of people face it simultaneously—sharing data, choosing apps, accepting terms of service—individual rational choices create systemic harms. Privacy erosion, attention manipulation, and information pollution aren't caused by a few bad actors. They're caused by millions of people making individually rational choices that collectively make everyone worse off.

Speed prevents coordination. The prisoner's dilemma assumes players can't communicate before choosing. Technology enforces this. You accept terms of service instantly, without coordinating with other users. You share data without knowing what others are doing. You choose apps based on network effects—where everyone else already is—without the ability to collectively switch to better alternatives. The speed of digital decisions prevents the deliberation that might enable cooperation.

Structure creates winner-take-all dynamics. Network effects mean the value of a platform increases with the number of users. This creates a coordination problem: everyone would be better off on a privacy-respecting platform, but no one wants to be the first to switch and lose access to the network. The first-mover disadvantage locks everyone into collectively suboptimal choices. Facebook isn't dominant because it's the best social network—it's dominant because everyone else is already there.

Technology doesn't just present prisoner's dilemmas—it manufactures them at scale, enforces them at speed, and structures markets to make cooperation nearly impossible.

The Tragedy of Rational Choice

The prisoner's dilemma reveals something uncomfortable about rationality. Being rational—maximizing your individual outcome—can make everyone worse off. This isn't a bug in human reasoning. It's a feature of situations where individual and collective interests diverge.

Garrett Hardin called this the "tragedy of the commons" in 1968. When a resource is shared but decisions are individual, rational actors will overuse it until it collapses. Each farmer benefits from adding one more cow to the common pasture, but when all farmers follow this logic, the pasture is destroyed. Each person benefits from driving instead of taking public transit, but when everyone drives, traffic becomes unbearable. Each company benefits from polluting, but when all companies pollute, the environment degrades.

The prisoner's dilemma is the mathematical structure underlying these tragedies. Individual rationality leads to collective irrationality. Self-interest undermines shared interests. Optimization at the individual level creates dysfunction at the system level.
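The same structure extends from two players to many. One common way to model it is a public goods game, sketched below under invented numbers: ten players, one unit each, and a shared pot that triples whatever is contributed before splitting it equally. The function name and every parameter are hypothetical, chosen only to show the shape of the incentive.

```python
def payoff(contributes, others_contributing, n_players=10, multiplier=3.0):
    """Payoff to one player in a simple public goods game.

    Each player starts with 1 unit. Contributed units are multiplied by
    `multiplier` and the pot is split equally among all `n_players`,
    whether or not they contributed.
    """
    pot = (others_contributing + (1 if contributes else 0)) * multiplier
    kept = 0 if contributes else 1
    return kept + pot / n_players

n = 10
for others in (0, 5, 9):
    give = payoff(True, others, n)
    keep = payoff(False, others, n)
    print(f"{others} others contribute: give={give:.1f}, keep={keep:.1f}")

everyone_gives = payoff(True, n - 1, n)
everyone_keeps = payoff(False, 0, n)
print(f"all give: {everyone_gives:.1f} each, all keep: {everyone_keeps:.1f} each")
```

Whatever the others do, keeping your unit pays more than giving it. Yet a group where everyone gives ends up richer, per person, than a group where everyone keeps. That is the commons in miniature.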

And technology has turned the commons digital. Data privacy is a commons—everyone benefits when everyone protects it, but each person benefits from sharing. Attention is a commons—everyone benefits from ethical design, but each app benefits from being more addictive. Truth is a commons—everyone benefits from accurate information, but each outlet benefits from viral sensationalism.

What Makes Cooperation Possible

If the prisoner's dilemma is so fundamental, how does cooperation ever emerge? Game theory offers several answers, and they're all relevant to technology.

Repeated interactions build trust. When you know you'll face the same person again, betrayal today invites retaliation tomorrow. This is why Tit-for-Tat works in iterated games. But technology often creates one-shot interactions: you'll never see this Uber driver again, never interact with this Twitter user again, never open this app again after granting its permissions. When interactions don't repeat, cooperation collapses.

Communication enables coordination. If prisoners could talk before choosing, they might agree to stay silent. But the dilemma assumes they can't communicate. Technology often enforces this isolation. Terms of service are take-it-or-leave-it. Platform rules are set unilaterally. Users can't coordinate to demand better alternatives because platforms control the channels of communication.

Punishment deters defection. If betrayal can be detected and punished, cooperation becomes rational. But technology makes defection invisible. You don't know which companies are selling your data. You don't know which apps are manipulating your attention. You don't know which platforms are amplifying misinformation. Without visibility, there's no accountability. Without accountability, there's no deterrence.

Changing the payoff structure aligns incentives. If cooperation becomes more rewarding or defection becomes more costly, the Nash equilibrium shifts. This is what regulation does—it changes the payoffs. GDPR makes privacy violations costly. Antitrust enforcement makes monopolistic behavior risky. Labor protections make worker exploitation expensive. When individual incentives align with collective welfare, cooperation emerges naturally.
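A rough sketch of how a changed payoff moves the equilibrium: take the original prison sentences and add a penalty, in extra years, to anyone who betrays. The penalty is a hypothetical stand-in for a fine or sanction, and `with_penalty` and `nash_equilibria` are names invented for this example.

```python
from itertools import product

MOVES = ("silent", "betray")

# Baseline sentences in years for (player A, player B); lower is better.
BASE_YEARS = {
    ("silent", "silent"): (1, 1),
    ("silent", "betray"): (3, 0),
    ("betray", "silent"): (0, 3),
    ("betray", "betray"): (2, 2),
}

def with_penalty(penalty):
    """Add `penalty` years to every player who betrays (a hypothetical fine)."""
    return {
        (a, b): (
            ya + (penalty if a == "betray" else 0),
            yb + (penalty if b == "betray" else 0),
        )
        for (a, b), (ya, yb) in BASE_YEARS.items()
    }

def nash_equilibria(years):
    """Outcomes where neither player can shorten their sentence by switching alone."""
    found = []
    for a, b in product(MOVES, MOVES):
        ya, yb = years[(a, b)]
        a_stays = all(years[(alt, b)][0] >= ya for alt in MOVES)
        b_stays = all(years[(a, alt)][1] >= yb for alt in MOVES)
        if a_stays and b_stays:
            found.append((a, b))
    return found

print(nash_equilibria(with_penalty(0)))  # [('betray', 'betray')]
print(nash_equilibria(with_penalty(2)))  # [('silent', 'silent')]
```

With these particular numbers, any penalty above one year is enough to make silence the better choice no matter what the other player does. The players haven't become more virtuous. The game has changed underneath them.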

The Series Ahead

Over the next six days, we'll explore how the prisoner's dilemma manifests in specific domains of technology. Each case reveals the same pattern: individual rational choices creating collectively irrational outcomes. But each also reveals different mechanisms, different stakes, and different possibilities for escape.

We'll examine privacy, where individual data sharing creates collective surveillance. Attention, where apps compete to be more addictive. The gig economy, where workers compete against each other while platforms capture value. Open source, where everyone benefits from contributing but each actor benefits more from free-riding. Misinformation, where truth-telling loses to viral sensationalism. And finally, solutions—how we might build technology that enables cooperation instead of enforcing competition.

The prisoner's dilemma isn't just a game. It's a lens for understanding why technology so often makes us collectively worse off even as we each make individually rational choices. And understanding the structure of the problem is the first step toward changing it.

The question isn't whether we're in a prisoner's dilemma. We are, thousands of times per day, in every app we use and every platform we join. The question is whether we'll recognize it, and whether we'll build systems that make cooperation the rational choice instead of the impossible one.