Your Random Isn't Random: Pseudorandomness, Entropy, and Why Cryptography Depends on a Philosophical Problem

In 2008, a security researcher named Luciano Bello noticed something strange about Debian Linux's implementation of OpenSSL. Two years earlier, a Debian maintainer had removed two lines of code that appeared to be using uninitialized memory, a common source of bugs. The fix looked reasonable. Static analysis tools had flagged the lines. The maintainer was being responsible.

The problem was that those two lines were the primary source of entropy for OpenSSL's random number generator. By removing them, the maintainer had reduced the entire seed space to roughly 32,768 (2^15) possible values, the default range of process IDs on a Linux system. Every SSL certificate, every SSH key, every piece of cryptographic material generated on Debian or Ubuntu systems over those two years was drawn from a pool so small that an attacker could try every possibility in minutes.[1]

Hundreds of thousands of keys were compromised. Not because the cryptographic algorithms were weak, not because the math was wrong, but because the "randomness" wasn't random.

The Determinism Problem

Computers are, at their core, deterministic machines. Given the same input, a processor will always produce the same output. This is a feature, not a bug. Reproducibility is what makes software reliable, testable, and debuggable. But it creates a fundamental tension: how does a deterministic machine produce something unpredictable?

The short answer is that it can't. Not really. What it can do is produce sequences of numbers that look random to an observer who doesn't know how they were generated. These are pseudorandom number generators, or PRNGs, and they're the workhorses behind nearly every application that uses "randomness" in software.

A PRNG takes a starting value, called a seed, and applies a mathematical function to it repeatedly. Each application produces the next number in the sequence. The function is designed so that the output appears statistically random: uniformly distributed, no obvious patterns, passing standard tests for randomness. But the sequence is entirely determined by the seed. Same seed, same sequence, every time.
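The seed-and-iterate idea can be sketched with a linear congruential generator, one of the simplest PRNG designs. This is a toy for illustration, not something to use in practice; the class name `TinyLCG` and the seeds are made up for the example, and the constants are the ones commonly attributed to Numerical Recipes.

```python
class TinyLCG:
    """A toy linear congruential generator (illustration only)."""

    # Commonly published constants; other full-period choices
    # would illustrate the same point.
    MODULUS = 2**32
    MULTIPLIER = 1664525
    INCREMENT = 1013904223

    def __init__(self, seed: int) -> None:
        self.state = seed % self.MODULUS

    def next_value(self) -> int:
        # Apply the same function to the current state to get the next one.
        self.state = (self.MULTIPLIER * self.state + self.INCREMENT) % self.MODULUS
        return self.state


def first_values(seed: int, count: int) -> list[int]:
    """Return the first `count` outputs produced from `seed`."""
    generator = TinyLCG(seed)
    return [generator.next_value() for _ in range(count)]


run_a = first_values(seed=2008, count=5)
run_b = first_values(seed=2008, count=5)
run_c = first_values(seed=2009, count=5)

print(run_a == run_b)  # True: same seed, same sequence
print(run_a == run_c)  # False: a different seed gives a different sequence
```

Nothing in the generator is hidden or uncertain. Anyone who learns the seed (or any single state value) can replay the whole sequence.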

This is Laplace's Demon in miniature. The PRNG is a tiny deterministic universe. If you know its initial state, you know its entire future. The "randomness" is epistemic, not ontological. It exists in the observer's ignorance, not in the mechanism itself.

For many applications, this is perfectly fine. Shuffling a playlist, assigning colors in a data visualization, distributing simulated particles in a game engine: these need statistical uniformity, not genuine unpredictability. The Mersenne Twister, one of the most widely used PRNGs, produces sequences with excellent statistical properties and a period of $2^{19937} - 1$. It's also completely predictable. Given 624 consecutive outputs, you can reconstruct the internal state and predict every future value.[2]
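To make the 624-output claim concrete, here is a sketch of the state-recovery attack against Python's `random` module, which uses the Mersenne Twister (MT19937) in CPython. It assumes the attacker sees full 32-bit outputs via `getrandbits(32)` and relies on CPython's `setstate` tuple layout; the function names and the seed are invented for the example.

```python
import random


def undo_right_shift_xor(value: int, shift: int) -> int:
    """Invert y = x ^ (x >> shift) for a 32-bit x."""
    result = value
    for _ in range(32 // shift + 1):
        result = value ^ (result >> shift)
    return result


def undo_left_shift_xor_mask(value: int, shift: int, mask: int) -> int:
    """Invert y = x ^ ((x << shift) & mask) for a 32-bit x."""
    result = value
    for _ in range(32 // shift + 1):
        result = value ^ ((result << shift) & mask)
    return result


def untemper(output: int) -> int:
    """Reverse MT19937's output tempering to recover one raw state word."""
    value = undo_right_shift_xor(output, 18)
    value = undo_left_shift_xor_mask(value, 15, 0xEFC60000)
    value = undo_left_shift_xor_mask(value, 7, 0x9D2C5680)
    value = undo_right_shift_xor(value, 11)
    return value


def clone_generator(observed_outputs: list[int]) -> random.Random:
    """Build a generator whose future matches the one that made the outputs."""
    if len(observed_outputs) != 624:
        raise ValueError("need exactly 624 consecutive 32-bit outputs")
    state_words = [untemper(value) for value in observed_outputs]
    clone = random.Random()
    # CPython state layout: (version, 624 state words + position index, gauss_next).
    # Index 624 means "all words consumed; regenerate on the next call".
    clone.setstate((3, tuple(state_words + [624]), None))
    return clone


victim = random.Random(20080513)  # arbitrary seed the "attacker" never sees
observed = [victim.getrandbits(32) for _ in range(624)]
clone = clone_generator(observed)

# The clone should now stay in lockstep with the victim.
predictions_match = all(
    clone.getrandbits(32) == victim.getrandbits(32) for _ in range(1000)
)
print(predictions_match)
```

The attacker never learns the seed. Observing enough output is sufficient, which is exactly the property a cryptographic generator must not have.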

For a game, that's irrelevant. For cryptography, it's catastrophic.

The Entropy Question

Cryptographic security depends on a simple premise: an attacker cannot predict or reproduce the random values used to generate keys, nonces, and session tokens. If they can, the encryption is theater. The lock is real, but the key is hanging on a nail beside the door.

Cryptographically secure pseudorandom number generators (CSPRNGs) address this by making the internal state computationally infeasible to reconstruct from the output. Even if you observe a long sequence of values, you can't work backward to the state that produced them, assuming the underlying primitive remains hard to break. In deployed systems that primitive is usually a cipher or hash function; some theoretical constructions rest instead on problems like factoring large numbers or computing discrete logarithms.

But a CSPRNG is still deterministic. It still needs a seed. And the security of the entire system ultimately depends on the quality of that seed, which is where entropy comes in.
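A toy generator shows why the seed carries all the weight. The sketch below hashes the seed together with a counter using SHA-256; it is not a vetted CSPRNG design, and `toy_hash_stream` and the example seed are hypothetical names and values chosen for illustration.

```python
import hashlib


def toy_hash_stream(seed: bytes, num_blocks: int) -> bytes:
    """Toy generator: SHA-256(seed || counter) for counter = 0, 1, 2, ...

    Illustration only. Real systems should use the operating system's CSPRNG.
    """
    blocks = []
    for counter in range(num_blocks):
        block = hashlib.sha256(seed + counter.to_bytes(8, "big")).digest()
        blocks.append(block)
    return b"".join(blocks)


# A seed with very little entropy, such as a process ID.
weak_seed = (12345).to_bytes(2, "big")

stream_1 = toy_hash_stream(weak_seed, num_blocks=2)
stream_2 = toy_hash_stream(weak_seed, num_blocks=2)

print(stream_1 == stream_2)  # True: the hash adds no unpredictability of its own
```

The output looks like noise, but anyone who can guess the seed can regenerate every byte. A strong algorithm cannot compensate for a weak seed.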

Entropy, in the information-theoretic sense, measures unpredictability. A fair coin flip has one bit of entropy. A fair die roll has about 2.58 bits. A value drawn from a source with zero entropy is completely predictable, no matter how complicated the algorithm that processes it.
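These figures come from Shannon's formula, H = -Σ p·log2(p). A short sketch, with `entropy_bits` as an illustrative helper name, reproduces them and applies the same measure to a seed drawn uniformly from 32,768 possible process IDs:

```python
import math


def entropy_bits(probabilities: list[float]) -> float:
    """Shannon entropy in bits: H = -sum(p * log2(p)) over nonzero p."""
    return sum(-p * math.log2(p) for p in probabilities if p > 0)


fair_coin = [0.5, 0.5]
fair_die = [1 / 6] * 6
certain_outcome = [1.0]
pid_seed = [1 / 32768] * 32768  # uniform over 2**15 process IDs

print(entropy_bits(fair_coin))            # 1.0
print(round(entropy_bits(fair_die), 3))   # 2.585
print(entropy_bits(certain_outcome))      # 0.0
print(round(entropy_bits(pid_seed), 3))   # 15.0
```

Fifteen bits is the most a process-ID seed can offer, regardless of how many bits the resulting key appears to have.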

Operating systems gather entropy from physical processes: the timing of keyboard presses, mouse movements, disk I/O interrupts, network packet arrival times, thermal noise from hardware sensors. These are physical events whose precise timing is influenced by enough variables that they're effectively unpredictable. Linux collects this entropy into a pool and makes it available through /dev/random and /dev/urandom.[3]
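Application code rarely reads these devices directly. In Python, for instance, `os.urandom` and the `secrets` module draw from the operating system's generator. A minimal sketch follows; the variable names are arbitrary.

```python
import os
import secrets

# 32 bytes (256 bits) from the operating system's CSPRNG.
key_material = os.urandom(32)

# The secrets module wraps the same OS source with convenience helpers.
session_token = secrets.token_hex(16)   # 16 random bytes as 32 hex characters
die_roll = secrets.randbelow(6) + 1     # integer in 1..6

print(len(key_material), len(session_token), die_roll)
```

The general-purpose `random` module is the wrong tool for keys and tokens, because it is a Mersenne Twister seeded for statistical use rather than for secrecy.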

The philosophical question lurking here is whether these sources provide genuine randomness or just very good epistemic randomness. Is the timing of a keystroke truly unpredictable, or is it determined by the physics of your nervous system, the electrical properties of the keyboard, and the state of the USB bus? In principle, Laplace's Demon could predict it. In practice, nobody can, and that practical unpredictability is what security depends on.

When Randomness Fails

The history of cryptographic failures is, to a surprising degree, a history of bad randomness. The algorithms themselves are usually sound. The math holds up. What breaks is the assumption that the random inputs were actually random.

In 2012, a team of researchers reported that after scanning millions of TLS and SSH hosts, they were able to recover the RSA private keys of roughly 0.5% of the TLS hosts because their public keys shared a prime factor with another key. RSA security depends on the difficulty of factoring the product of two large primes. If two keys share a prime, computing the greatest common divisor of the two public moduli reveals that prime, and dividing it out yields both private keys almost instantly. According to the researchers, the likely cause was embedded devices like routers and firewalls generating keys at boot time, before the system had collected enough entropy. The "random" primes, they argued, weren't random enough, and some devices ended up choosing the same ones.[4]
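The shared-prime attack is short enough to sketch in full. The example below uses small Mersenne primes as stand-ins for real RSA primes (which would be 1024 bits or more) and the common public exponent 65537; the variable and function names are illustrative.

```python
import math

PUBLIC_EXPONENT = 65537

# Toy primes (Mersenne primes) standing in for real RSA primes.
shared_prime = 2**61 - 1
other_prime_a = 2**31 - 1
other_prime_b = 2**89 - 1

# Two devices with poor entropy picked the same first prime.
modulus_a = shared_prime * other_prime_a
modulus_b = shared_prime * other_prime_b


def recover_private_exponent(modulus: int, known_prime: int) -> int:
    """Given one prime factor of an RSA modulus, derive the private exponent."""
    other_prime = modulus // known_prime
    phi = (known_prime - 1) * (other_prime - 1)
    return pow(PUBLIC_EXPONENT, -1, phi)  # modular inverse (Python 3.8+)


# The attacker sees only the two public moduli.
recovered_prime = math.gcd(modulus_a, modulus_b)
private_exponent_a = recover_private_exponent(modulus_a, recovered_prime)

# Check: decrypt a message that was encrypted to device A's public key.
message = 42
ciphertext = pow(message, PUBLIC_EXPONENT, modulus_a)
decrypted = pow(ciphertext, private_exponent_a, modulus_a)

print(recovered_prime == shared_prime)  # True
print(decrypted)                        # 42
```

Factoring either modulus on its own is the hard problem RSA relies on. Euclid's algorithm sidesteps it entirely once two moduli have a factor in common.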

In 2010, a hacking group called fail0verflow presented findings at the Chaos Communication Congress claiming they had broken Sony's PlayStation 3 code-signing system. According to their presentation, the PS3's implementation of ECDSA (Elliptic Curve Digital Signature Algorithm) reused a random value that was supposed to be unique for every signature. The ECDSA algorithm requires a fresh random number k for each signature; if k is ever reused, the private key can be computed from two signatures using basic algebra. The group reported that Sony used the same k every time, allowing them to extract and publish the private key that was supposed to protect the entire PS3 software ecosystem.[5]
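The "basic algebra" can be sketched without any elliptic-curve code. An ECDSA signature is a pair (r, s) with s = k⁻¹(z + r·d) mod n, where z is the message hash, d the private key, and n the group order. The sketch below makes two simplifying assumptions that are not part of real ECDSA: a toy prime stands in for n, and r is an arbitrary fixed number rather than a value derived from k on a curve. All that matters for the attack is that reusing k repeats r.

```python
import hashlib

# Toy prime modulus standing in for the curve's group order (assumption).
GROUP_ORDER = 2**127 - 1


def message_hash(message: bytes) -> int:
    """Hash a message to an integer modulo the group order."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % GROUP_ORDER


def toy_sign(private_key: int, nonce: int, r_value: int, message: bytes) -> tuple[int, int]:
    """Compute s = k^-1 * (z + r*d) mod n, the ECDSA signing equation."""
    z = message_hash(message)
    s = pow(nonce, -1, GROUP_ORDER) * (z + r_value * private_key) % GROUP_ORDER
    return r_value, s


def recover_private_key(sig_1, message_1: bytes, sig_2, message_2: bytes) -> int:
    """Recover d from two signatures that share the same nonce k (and so the same r)."""
    (r, s1), (_, s2) = sig_1, sig_2
    z1, z2 = message_hash(message_1), message_hash(message_2)
    # s1 - s2 = k^-1 * (z1 - z2)  =>  k = (z1 - z2) / (s1 - s2)
    nonce = (z1 - z2) * pow((s1 - s2) % GROUP_ORDER, -1, GROUP_ORDER) % GROUP_ORDER
    # s1 = k^-1 * (z1 + r*d)      =>  d = (s1*k - z1) / r
    return (s1 * nonce - z1) * pow(r, -1, GROUP_ORDER) % GROUP_ORDER


# Hypothetical values for the demonstration.
secret_key = 0x1234567890ABCDEF1234567890ABCDEF % GROUP_ORDER
reused_nonce = 0x0F1E2D3C4B5A69788796A5B4C3D2E1F0 % GROUP_ORDER
repeated_r = 987654321987654321  # stand-in for the x-coordinate of k*G

signature_1 = toy_sign(secret_key, reused_nonce, repeated_r, b"firmware v1")
signature_2 = toy_sign(secret_key, reused_nonce, repeated_r, b"firmware v2")

recovered_key = recover_private_key(signature_1, b"firmware v1", signature_2, b"firmware v2")
print(recovered_key == secret_key)  # True
```

Two signatures and a handful of modular operations are all it takes. No property of the curve itself is attacked.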

Then there's the case of Netscape's original SSL implementation. In 1995, two UC Berkeley graduate students, Ian Goldberg and David Wagner, reported that they had examined how Netscape generated the random numbers used to create SSL session keys. According to their findings, the browser's PRNG was seeded using just three values: the current time of day, the process ID, and the parent process ID. All three, they noted, were either predictable or easily guessable. They claimed that an attacker who knew roughly when a connection was established could try every plausible seed combination and crack the session key in under a minute. If accurate, the entire security model of the most popular browser on the internet had been reduced to a guessing game with a trivially small search space.[6] The cryptographic algorithms were fine. The math was sound. But the seed that bootstrapped everything was barely random at all.
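The shape of such an attack can be sketched as an exhaustive search. This is not Netscape's actual key-derivation code: `toy_session_key` is a hypothetical stand-in that hashes the three seed values, the timestamps and process IDs are invented, and the equality check stands in for testing a candidate key against intercepted traffic.

```python
import hashlib
from itertools import product


def toy_session_key(seconds: int, pid: int, ppid: int) -> bytes:
    """Hypothetical key derivation from time, process ID, and parent process ID."""
    seed = f"{seconds}:{pid}:{ppid}".encode()
    return hashlib.sha256(seed).digest()[:16]


def brute_force_seed(observed_key: bytes, time_window, pid_range, ppid_range):
    """Try every plausible (time, pid, ppid) combination until one matches."""
    for seconds, pid, ppid in product(time_window, pid_range, ppid_range):
        if toy_session_key(seconds, pid, ppid) == observed_key:
            return seconds, pid, ppid
    return None


# The victim's "secret" seed values (invented for the example).
victim_key = toy_session_key(seconds=811_000_017, pid=1234, ppid=1)

# The attacker knows the connection time to within 30 seconds and
# assumes small process IDs, as on a freshly booted machine.
found = brute_force_seed(
    victim_key,
    time_window=range(811_000_000, 811_000_030),
    pid_range=range(1, 2000),
    ppid_range=range(1, 3),
)
print(found)  # (811000017, 1234, 1)
```

The search above covers about 120,000 candidates, which a laptop finishes in well under a second. A 128-bit key drawn from real entropy would have roughly 3.4 × 10^38.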

These aren't edge cases. They're illustrations of a systemic vulnerability: cryptographic systems are only as strong as their randomness, and randomness is harder to get right than the cryptography itself.

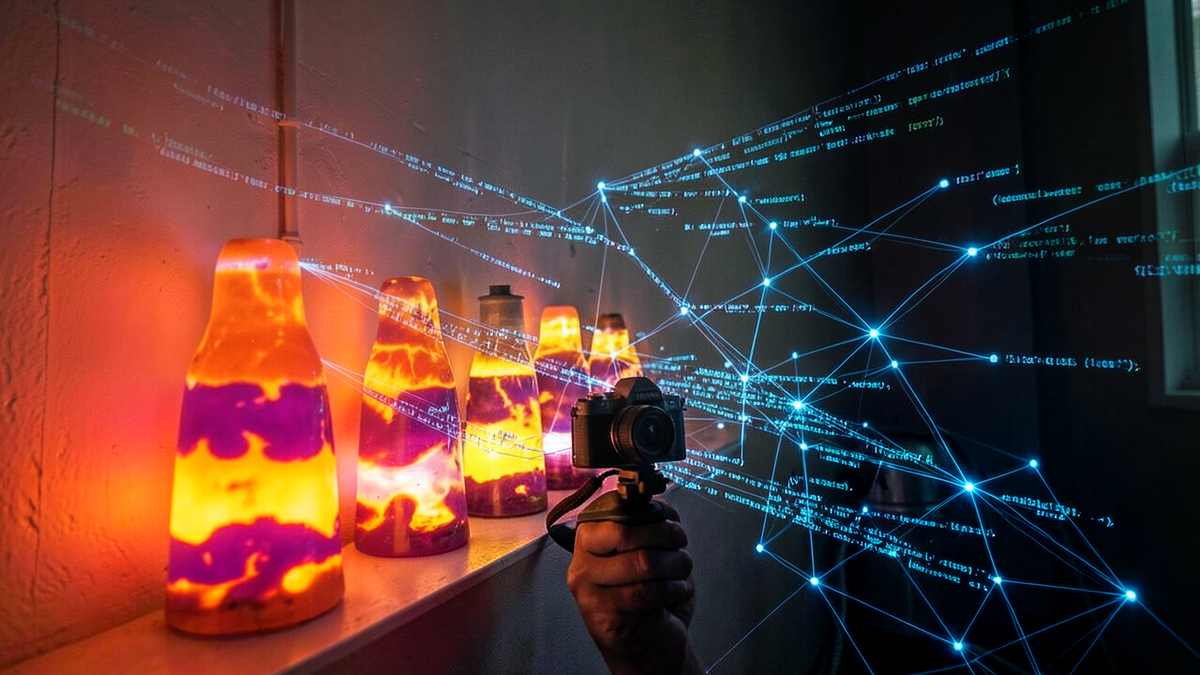

Lava Lamps and Quantum Dice

If software can't produce true randomness, where do you get it? One approach is to lean harder into physical entropy sources. Cloudflare, which handles a significant portion of internet traffic, famously uses a wall of lava lamps as one of its entropy sources. A camera photographs the lamps, and the chaotic, turbulent motion of the wax produces a stream of unpredictable pixel values that feed into the entropy pool.[7]

It's a striking image: the security of encrypted internet connections depending partly on the physics of heated paraffin wax. But the principle is sound. The motion of lava in a lamp is a chaotic system, sensitive to initial conditions in ways that make long-term prediction practically impossible.
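One common way to combine a physical source with others is to hash them together, so the result stays unpredictable as long as any one input is. The sketch below illustrates that general idea only; it is not Cloudflare's pipeline, and `mix_entropy` and the placeholder camera frame are hypothetical.

```python
import hashlib
import os


def mix_entropy(sources: list[bytes]) -> bytes:
    """Hash several entropy inputs into one 32-byte seed.

    Each input is length-prefixed so that different splits of the same
    bytes cannot collide.
    """
    hasher = hashlib.sha256()
    for source in sources:
        hasher.update(len(source).to_bytes(8, "big"))
        hasher.update(source)
    return hasher.digest()


# Placeholder bytes standing in for real pixel data from a camera.
camera_frame = bytes(range(256)) * 4

seed = mix_entropy([camera_frame, os.urandom(32)])
print(seed.hex())
```

An attacker who could somehow predict the camera frame would still face the operating system's randomness, and vice versa.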

Hardware random number generators (HRNGs) take a more direct approach. Intel's RDRAND instruction, available on modern x86 processors, returns bits from an on-chip generator that is seeded by sampling thermal noise in the silicon. That noise is a physical process influenced by quantum effects, making it arguably a source of ontological randomness rather than merely epistemic randomness.

Dedicated quantum random number generators go further, deriving randomness from quantum mechanical processes like photon detection or vacuum fluctuations. These devices produce randomness that is, according to our best understanding of physics, genuinely irreducible. No amount of information about the system's prior state would allow prediction of the next bit.

The practical question is whether the distinction matters. If a chaotic system produces output that no attacker can predict, does it matter whether the unpredictability is "genuine" or merely computational? For cryptography, the answer is nuanced. Chaotic systems are deterministic; a sufficiently powerful adversary with enough information about initial conditions could, in theory, predict them. Quantum systems are not; no amount of information helps. In practice, the chaotic sources are good enough for most threat models. But as computing power grows and adversaries become more sophisticated, the gap between epistemic and ontological randomness may become practically relevant.

The Reproducibility Paradox

There's an irony at the heart of randomness in computing. Security needs unpredictability: the same input should never produce the same output. But science needs reproducibility: the same experiment should always produce the same result.

Monte Carlo simulations, statistical analyses, and machine learning experiments all depend on random numbers, but they also need to be reproducible. If you can't rerun an experiment and get the same result, you can't verify it. The solution is to use a PRNG with a known seed. The sequence looks random, behaves randomly for statistical purposes, but can be exactly reproduced by anyone who knows the seed.
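A seeded Monte Carlo estimate of π shows the idea. The function name, seeds, and sample count below are arbitrary choices for the example.

```python
import random


def estimate_pi(seed: int, samples: int) -> float:
    """Estimate pi by sampling points in the unit square with a seeded PRNG."""
    rng = random.Random(seed)  # private generator, so global state is untouched
    inside = 0
    for _ in range(samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            inside += 1
    return 4 * inside / samples


first_run = estimate_pi(seed=42, samples=100_000)
second_run = estimate_pi(seed=42, samples=100_000)
other_seed = estimate_pi(seed=43, samples=100_000)

print(first_run == second_run)  # True: identical seed, identical result
print(first_run, other_seed)    # two slightly different estimates near 3.14
```

Publishing the seed alongside the result lets anyone rerun the experiment exactly. Publishing the seed of a key generator would be a disaster for the same reason.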

This creates a clean separation between two uses of randomness that are philosophically distinct. Cryptographic randomness needs to be unpredictable to everyone. Scientific randomness needs to be unpredictable to the process being studied, but reproducible to the researcher. The same word, "random," covers both, but the requirements are almost opposite.

The Debian OpenSSL bug was so devastating precisely because it collapsed this distinction. The system was supposed to produce cryptographic randomness, unpredictable to any observer. Instead, it produced something closer to scientific randomness: deterministic, reproducible, and predictable to anyone who knew the (tiny) seed space.

The Mask of Chaos

Every time you connect to a website over HTTPS, generate an SSH key, or create an encrypted backup, you're trusting that somewhere in the stack, a source of genuine unpredictability fed a seed into a deterministic algorithm that produced numbers indistinguishable from random. You're trusting that the mask of chaos is good enough.

Most of the time, it is. Modern operating systems, cryptographic libraries, and hardware have gotten remarkably good at gathering entropy and turning it into cryptographically secure pseudorandom streams. The failures, when they happen, tend to come from the edges: embedded devices with no good entropy sources, implementations that accidentally discard entropy, or deliberate sabotage by actors who understand that controlling the randomness means controlling the security.

The philosophical question, whether randomness is real or just a name for ignorance, turns out to have engineering consequences. If all randomness is epistemic, then in principle, every cryptographic system is breakable by a sufficiently informed adversary. If quantum randomness is ontological, then there exist sources of unpredictability that no amount of knowledge can defeat. The practical difference between these positions is, for now, small. But it's not zero, and it's growing as both our cryptographic needs and our adversaries' capabilities increase.

Deterministic machines generating pseudorandom numbers is the modern version of an ancient philosophical tension: order pretending to be chaos, mechanism wearing the mask of chance. The mask works, mostly. But the history of cryptographic failures is a reminder that when it slips, the consequences are real.

References

[1] "DSA-1571-1 openssl — predictable random number generator," Debian Security Advisory, May 13, 2008. https://www.debian.org/security/2008/dsa-1571

[2] Makoto Matsumoto and Takuji Nishimura, "Mersenne Twister: A 623-Dimensionally Equidistributed Uniform Pseudo-Random Number Generator," ACM Transactions on Modeling and Computer Simulation, 8(1), 3–30, 1998. https://en.wikipedia.org/wiki/Mersenne_Twister

[3] "Random number generation," Linux Kernel Documentation. https://www.kernel.org/doc/html/latest/admin-guide/devices.html

[4] Nadia Heninger et al., "Mining Your Ps and Qs: Detection of Widespread Weak Keys in Network Devices," USENIX Security Symposium, 2012. https://www.usenix.org/conference/usenixsecurity12/technical-sessions/presentation/heninger

[5] Kyle Orland, "PS3 hacked through poor implementation of cryptography," Ars Technica, December 30, 2010. https://arstechnica.com/gaming/2010/12/ps3-hacked-through-poor-implementation-of-cryptography/

[6] Ian Goldberg and David Wagner, "Randomness and the Netscape Browser," Dr. Dobb's Journal, January 1996. https://people.eecs.berkeley.edu/~daw/press/iang/ian1.html

[7] Joshua Liebow-Feeser, "Randomness 101: LavaRand in Production," Cloudflare Blog, November 6, 2017. https://blog.cloudflare.com/randomness-101-lavarand-in-production/