The Moral Weight of Inaction: Is Not Deploying AI a Trolley Problem Too?

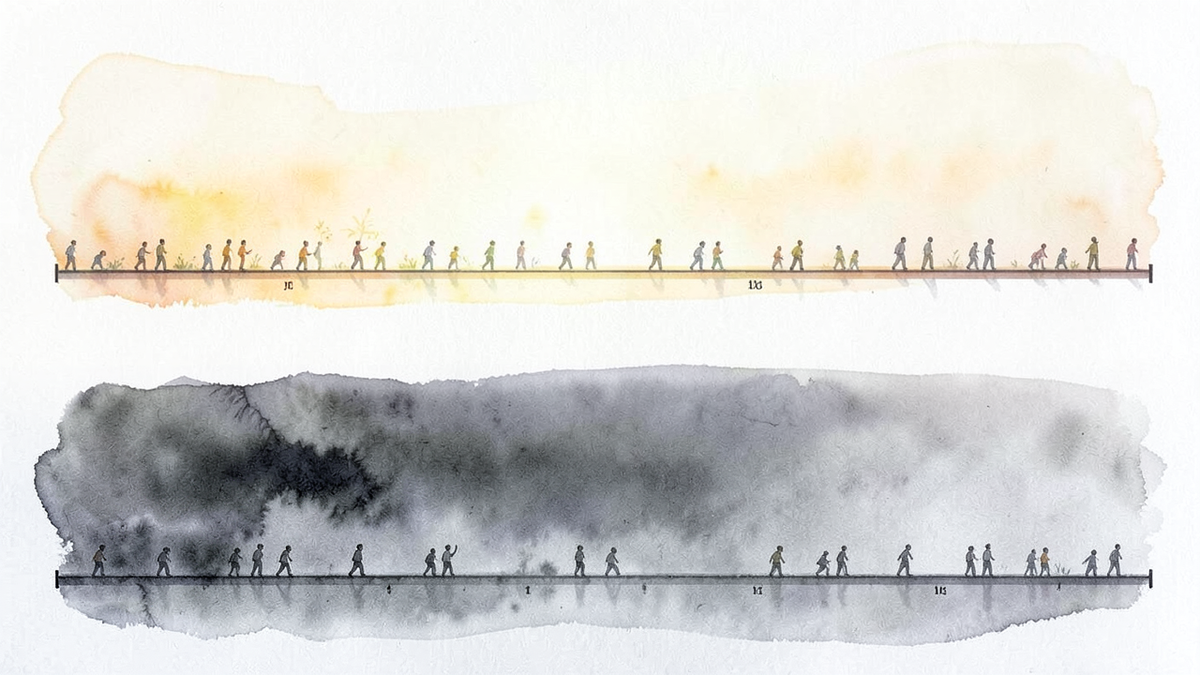

In the classic trolley problem, the moral spotlight falls on the person at the lever. They pull it or they don't, and we debate whether their choice was justified. But there is a third figure in the scenario who receives far less scrutiny: a bystander standing nearby who sees the lever, understands the situation, and could act, but doesn't. Regardless of what the person at the lever decides, this bystander watches the scene unfold and walks away. People die. The bystander bears no formal responsibility, yet they had the capacity to intervene.

Most discussions of algorithmic ethics focus on the harms that AI systems cause. This post asks a different question: what about the harms that exist because AI systems weren't deployed?

The Action/Omission Distinction

Moral philosophy has long debated whether there is a meaningful difference between causing harm and allowing harm to occur. In a 1975 paper on active and passive euthanasia, the philosopher James Rachels challenged this distinction directly. He described two men, Smith and Jones, who each stand to gain an inheritance if a young cousin dies. Smith drowns his cousin in the bath. Jones sneaks in intending to do the same, sees the child slip, hit his head, and fall face down in the water, and simply stands by as the child drowns. Rachels argued that when the motive and the outcome are the same, the bare difference between killing and letting die does not, in itself, make a moral difference.[1]

Peter Singer had made a related argument three years earlier with his drowning child thought experiment: if you walk past a shallow pond where a child is drowning, and you could save the child at minimal cost to yourself, failing to act is morally wrong. Writing about famine relief, Singer argued that neither physical distance nor the presence of other people who could also help changes that obligation.[2]

Applied to technology, the question becomes pointed. Suppose an AI diagnostic tool can catch some cancers that human radiologists miss, as a large retrospective evaluation of an AI system for breast cancer screening has suggested,[3] and a hospital declines to deploy it because of concerns about liability, cost, or imperfection. What is the moral status of the cancers that go undetected? The patients who receive late diagnoses will likely never know that an available tool might have caught their disease sooner. The harm is real but invisible.

The Status Quo Is Not Neutral

We tend to treat the existing state of affairs as a moral baseline. Harms embedded in the status quo feel like background conditions; harms introduced by new technology feel like events that someone caused. This asymmetry has consequences.

A RAND Corporation analysis modeled this trade-off for autonomous vehicles in the United States. It estimated that allowing them on the road once they are only modestly safer than the average human driver (10 percent better, in its most permissive policy), rather than waiting until they are 75 or 90 percent better, would save more lives over time; in many of the conditions it modeled, the difference reached hundreds of thousands of lives over 30 years.[4] The logic is straightforward: if the imperfect new system causes fewer deaths than the imperfect existing system, delay itself has a cost measured in lives.
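The arithmetic behind that logic can be made concrete with a toy calculation. This is not the RAND model: the starting safety gain, the yearly improvement, the baseline death count, and the function names below are all hypothetical. The sketch assumes the technology improves on the same schedule whether or not it is deployed and that adoption is instant. It then compares deploying as soon as the system beats the status quo with waiting until it is 90 percent safer.

```python
# Toy model of the cost of delay. Every number here is hypothetical;
# this is an illustration, not the RAND Corporation's model.


def safety_gain_pct(year: int) -> int:
    """Hypothetical percent of status-quo deaths the new system avoids in a given year.

    Assumes the system starts 10% safer than the status quo and improves by
    10 percentage points per year, capped at 90%, whether or not it is deployed.
    """
    return min(10 + 10 * year, 90)


def cumulative_deaths(baseline_per_year: int, horizon: int, threshold_pct: int) -> int:
    """Total deaths over `horizon` years if the system is deployed only once its
    safety gain reaches `threshold_pct`; before that, the status quo applies."""
    total = 0
    for year in range(horizon):
        gain = safety_gain_pct(year)
        if gain >= threshold_pct:
            total += baseline_per_year * (100 - gain) // 100  # deployed (instant adoption assumed)
        else:
            total += baseline_per_year  # still waiting: status-quo deaths
    return total


BASELINE = 1000  # hypothetical status-quo deaths per year
HORIZON = 10     # years simulated

deploy_early = cumulative_deaths(BASELINE, HORIZON, threshold_pct=10)
wait_for_best = cumulative_deaths(BASELINE, HORIZON, threshold_pct=90)

print(f"Deploy once 10% safer: {deploy_early} deaths")    # 4600
print(f"Wait until 90% safer:  {wait_for_best} deaths")   # 8200
print(f"Cost of waiting:       {wait_for_best - deploy_early} deaths")  # 3600
```

With these made-up inputs, early deployment yields 4,600 deaths over ten years versus 8,200 for waiting. The specific numbers mean nothing. The point is that every year spent below the deployment threshold is charged at the full status-quo rate. A more realistic model would also have to count harms the new system introduces that the status quo does not, which is where the precautionary argument gets its force.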

This framing is uncomfortable because it inverts the usual precautionary logic. The precautionary principle says: don't deploy until you're confident the technology is safe. The proactionary counterargument says: don't delay when delay itself causes harm. Both are moral positions, and the tension between them is genuine.

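The same pattern appears in drug discovery, where AI systems have in some cases identified promising candidates faster than traditional methods; in one 2019 study, a generative model was used to discover potent inhibitors of the DDR1 kinase in 21 days.[5] To the extent that AI-assisted approaches accelerate the pipeline, delays in adoption may translate into delays in treatment availability. In mental health, a small two-week randomized trial found that young adults using a fully automated chatbot that delivered cognitive behavioral therapy reported greater reductions in depression symptoms than a control group given self-help reading material.[6] Where access to therapists is limited, choosing not to deploy such tools because they are imperfect may mean that some people get no support at all.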

The Asymmetry of Visibility

Part of what makes inaction feel less morally weighty than action is visibility. When an AI system causes harm, the harm is specific, attributable, and often newsworthy. When the absence of an AI system causes harm, the harm is statistical, diffuse, and invisible. We can name the person harmed by an algorithm. We generally cannot name the people who would have been helped by an algorithm that was never built.

This asymmetry likely shapes incentives. A hospital administrator who deploys an AI system that makes one high-profile error may face professional consequences. An administrator who declines to deploy a system, and whose patients receive standard care with standard outcomes, typically faces none, even if the AI would have produced better outcomes on average. The visible failure is punished; the invisible failure often is not.
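The incentive problem can be made concrete with a toy model. This model is an illustration, not a description of any real hospital. The miss rates, patient volume, and helper names are invented, and the blame rule is an explicit assumption: errors made by a newly deployed AI system are attributed to whoever deployed it, while errors under standard care count as background. Under that rule, a decision-maker who minimizes attributable errors picks standard care even though it misses more cancers.

```python
# Toy model of the visibility asymmetry: total harm vs. harm that gets attributed.
# The miss rates and the blame rule are hypothetical assumptions, not data.
from dataclasses import dataclass


@dataclass
class Outcome:
    label: str
    total_errors: int       # all missed diagnoses, visible or not
    attributed_errors: int  # errors that someone can blame on a decision


def outcome(label: str, patients: int, misses_per_1000: int, deployed_ai: bool) -> Outcome:
    errors = patients * misses_per_1000 // 1000
    # Assumed blame rule: only errors made by a newly deployed AI system are
    # attributed to whoever deployed it; standard-care errors are "background".
    attributed = errors if deployed_ai else 0
    return Outcome(label, errors, attributed)


PATIENTS = 10_000  # hypothetical patient volume
options = [
    outcome("standard care", PATIENTS, misses_per_1000=8, deployed_ai=False),
    outcome("AI deployed", PATIENTS, misses_per_1000=5, deployed_ai=True),
]

best_for_patients = min(options, key=lambda o: o.total_errors)
safest_for_career = min(options, key=lambda o: o.attributed_errors)

print(f"Fewest missed cancers: {best_for_patients.label} ({best_for_patients.total_errors})")
print(f"Fewest attributable errors: {safest_for_career.label} ({safest_for_career.attributed_errors})")
```

Under these assumptions the two criteria disagree. The option with 50 missed cancers carries 50 attributable errors, and the option with 80 carries none. Real blame is messier, since one high-profile error may weigh more than many routine ones. Even so, the sketch shows how a blame rule that ignores outcomes can point away from the better option.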

The philosopher Jonathan Glover explored a closely related asymmetry in his work on acts and omissions. He argued that the moral distinction between killing and letting die is less significant than commonly assumed, and that our intuitions may lead us to underweight harms of omission relative to harms of commission.[7] In the context of AI deployment, this suggests we may be systematically overweighting the risks of action and underweighting the costs of inaction.

Perfectionism as a Moral Hazard

Demanding that AI systems be perfect before deployment sounds like prudence. But if the status quo is itself imperfect, and the AI system would be less imperfect, then perfectionism has a cost. The question is not whether the AI system is flawless. The question is whether it is better than what we have now, and whether the gap between "better" and "perfect" justifies the harm that continues in the meantime.

This doesn't mean every AI system should be deployed immediately and without caution. Premature deployment carries real risks, and the harms of a poorly designed system can be severe and difficult to reverse. The point is narrower: the decision not to deploy is itself a moral decision, with moral consequences, and it deserves the same scrutiny we give to the decision to deploy.

The lever-puller in the trolley problem gets all the attention. But the bystander who freezes, who sees the lever and the tracks and the people, and who chooses to do nothing, has also made a choice. In the context of AI systems that could reduce harm, inaction is not innocence. It is a decision to accept the world as it is, when we had the means to make it marginally better.

References

[1] James Rachels, "Active and Passive Euthanasia," New England Journal of Medicine, Vol. 292, No. 2, 1975, pp. 78–80. https://doi.org/10.1056/NEJM197501092920206

[2] Peter Singer, "Famine, Affluence, and Morality," Philosophy & Public Affairs, Vol. 1, No. 3, 1972, pp. 229–243.

[3] Scott Mayer McKinney et al., "International evaluation of an AI system for breast cancer screening," Nature, Vol. 577, 2020, pp. 89–94. https://doi.org/10.1038/s41586-019-1799-6

[4] Nidhi Kalra and David G. Groves, "The Enemy of Good: Estimating the Cost of Waiting for Nearly Perfect Automated Vehicles," RAND Corporation, 2017. https://www.rand.org/pubs/research_reports/RR2150.html

[5] Alex Zhavoronkov et al., "Deep learning enables rapid identification of potent DDR1 kinase inhibitors," Nature Biotechnology, Vol. 37, 2019, pp. 1038–1040. https://doi.org/10.1038/s41587-019-0224-x

[6] Kathleen Kara Fitzpatrick, Alison Darcy, and Molly Vierhile, "Delivering Cognitive Behavior Therapy to Young Adults With Symptoms of Depression via a Fully Automated Conversational Agent (Woebot)," JMIR Mental Health, Vol. 4, No. 2, 2017. https://doi.org/10.2196/mental.7785

[7] Jonathan Glover, Causing Death and Saving Lives, Penguin Books, 1977.