Maps, Models, and Digital Twins: When the Representation Becomes More Real Than Reality

In 1946, Jorge Luis Borges wrote a one-paragraph story about an empire whose cartographers created a map so detailed it was the same size as the territory it represented.[1] The map was, of course, useless. It offered no simplification, no abstraction, no advantage over simply looking at the territory itself. Later generations let it decay, and fragments of it could be found in the desert, sheltering animals.

The story was a joke about the absurdity of perfect representation. But decades later, we're building something Borges might not have found funny at all: digital models so detailed and so trusted that they've begun to replace the reality they were meant to represent. The map hasn't become the territory. It's become more authoritative than the territory.

When the Map Overrides the Territory

GPS navigation is the most familiar example. Satellite navigation systems provide turn-by-turn directions based on a digital model of the road network. The model is usually accurate. When it isn't, the results can be striking.

Reports of drivers following GPS directions into bodies of water, down closed roads, or off incomplete bridges surface regularly enough to constitute a recognizable pattern.[2] These aren't cases of people being foolish. They're cases of people trusting the model more than their direct perception of reality. The screen says turn right. The eyes say there's a lake. The screen wins, at least often enough to generate a steady stream of news stories.

This is Plato's Cave inverted. In the original allegory, the prisoners can't see reality because they're chained facing the wall. GPS users can see reality perfectly well. They choose the model anyway. The representation has become more authoritative than direct observation.

The pattern extends beyond individual navigation. Mapping platforms determine which businesses get foot traffic by controlling how they appear in search results. A restaurant that doesn't show up on the map might as well not exist for a significant portion of potential customers. The map doesn't just represent the territory; it shapes it. Businesses optimize for map visibility the way websites optimize for search rankings. The representation influences the reality it claims to merely describe.[3]

Traffic routing applications take this further. When a routing algorithm directs thousands of drivers through a residential neighborhood to avoid highway congestion, the algorithm's model of optimal routing reshapes the physical reality of that neighborhood: more traffic, more noise, more wear on roads not designed for through traffic. Residents have reported that algorithmic routing transformed quiet streets into de facto highways.[4] The model doesn't just represent traffic patterns. It creates them.

Financial Models and the 2008 Crisis

Finance offers perhaps the most consequential example of models overriding reality. In the years leading up to the 2008 financial crisis, the mortgage-backed securities market relied heavily on mathematical models to assess risk. The models, particularly those based on the Gaussian copula function popularized by David X. Li, provided precise-looking estimates of the probability that large numbers of mortgages would default simultaneously.[5]

The models said the risk was low. Traders, rating agencies, and regulators trusted the models. The underlying reality, that housing prices could decline nationally and that mortgage defaults were more correlated than the models assumed, was visible to anyone who looked directly at the data. But the model was more convenient, more precise-looking, and more compatible with the institutional incentive to keep lending.
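How much the answer depends on the correlation assumption can be illustrated with a toy simulation. The sketch below is not Li's formula or any institution's actual model. It is a minimal one-factor Gaussian copula, in which each loan's fate depends partly on a shared market factor and partly on its own luck. The function name, the 5% default rate, the 100-loan pool, and the 20% loss threshold are all illustrative assumptions.

```python
import random
from statistics import NormalDist


def tail_probability(rho, p_default=0.05, n_loans=100,
                     loss_threshold=0.20, n_trials=2000, seed=0):
    """Estimate the chance that at least `loss_threshold` of a loan pool
    defaults at once, under a toy one-factor Gaussian copula.

    rho is the assumed correlation between any two loans' latent
    "health" variables. All parameter values are illustrative.
    """
    rng = random.Random(seed)
    # A loan defaults when its latent variable falls below this cutoff,
    # so each loan on its own defaults with probability p_default.
    cutoff = NormalDist().inv_cdf(p_default)
    shared_weight = rho ** 0.5
    own_weight = (1.0 - rho) ** 0.5

    bad_trials = 0
    for _ in range(n_trials):
        market = rng.gauss(0.0, 1.0)  # one shock shared by every loan
        defaults = sum(
            1 for _ in range(n_loans)
            if shared_weight * market + own_weight * rng.gauss(0.0, 1.0) < cutoff
        )
        if defaults / n_loans >= loss_threshold:
            bad_trials += 1
    return bad_trials / n_trials


if __name__ == "__main__":
    for rho in (0.05, 0.5):
        print(f"rho={rho}: P(>=20% of pool defaults) ~ {tail_probability(rho)}")
```

In this sketch every loan has the same 5% chance of default no matter what rho is. Only the assumed correlation changes, yet the estimated chance of a pool-wide loss should come out far higher at rho = 0.5 than at rho = 0.05. A model that assumes the lower figure will report a comfortable number right up until the shared factor moves.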

When reality diverged from the model, the consequences were catastrophic. The financial crisis that followed destroyed trillions of dollars in wealth and triggered a global recession. The models hadn't been wrong in some minor, technical sense. They had fundamentally misrepresented the relationship between their inputs and reality. But because the models produced specific numbers with decimal places, they felt more authoritative than the messy, uncertain, qualitative assessments that might have captured the actual risk.

This is the shadow becoming more trusted than the object. The model (a mathematical representation of mortgage risk) was treated as more real than the mortgages themselves. When the model and reality disagreed, institutions chose the model.

Digital Twins and the Source of Truth

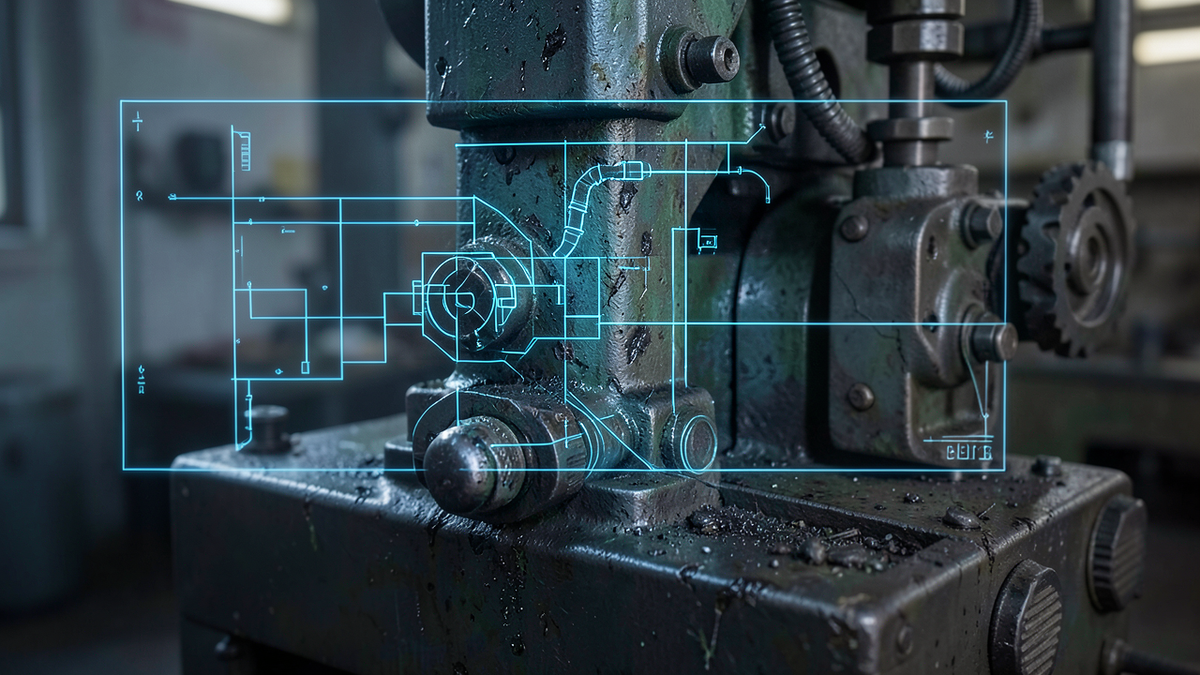

The concept of the digital twin takes the map-territory relationship to its logical extreme. A digital twin is a detailed virtual replica of a physical system: a factory, a building, a city, a human body. Sensors on the physical system feed data to the digital model, which simulates the system's behavior in real time.

Digital twins are genuinely useful. They allow engineers to test changes virtually before implementing them physically. They can predict maintenance needs, optimize performance, and simulate scenarios that would be dangerous or expensive to test in reality. Manufacturing companies report significant efficiency gains from digital twin implementations.[6]

The philosophical problem emerges when the digital twin becomes the primary reference point. When the model and the physical system disagree, which one do you trust? In practice, organizations increasingly trust the model. If the digital twin says a machine needs maintenance but the machine appears to be running fine, the maintenance gets scheduled. If the model says a process should be optimized a certain way, the physical process gets adjusted to match.

This isn't inherently wrong. The model often captures patterns that human observation misses. But it represents a subtle inversion: reality is being adjusted to match the model, rather than the model being adjusted to match reality. The shadow is directing the object.

When Software Overrides Perception

The most sobering example of models overriding reality involves safety-critical systems. The Boeing 737 MAX crashes of 2018 and 2019 involved a software system called MCAS (Maneuvering Characteristics Augmentation System) that relied on a single angle-of-attack sensor to judge whether the aircraft was approaching a stall. When that sensor provided faulty data, the software model "believed" the nose was dangerously high and repeatedly pushed it down. The pilots, who could see and feel that the plane was not pitching up, fought the software. The software won.[7]

This is the map-territory problem with fatal consequences. The software model of the aircraft's state (based on sensor data) overrode the pilots' direct perception of reality. The model was wrong. The pilots were right. But the system was designed to trust the model.
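One common defense against this failure mode is redundancy: compare independent sensors and decline to act automatically when they disagree. The sketch below illustrates the idea only. It is not Boeing's logic, and the function name, the two-sensor layout, and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrimDecision:
    command_nose_down: bool
    reason: str


def decide_trim(aoa_left_deg, aoa_right_deg,
                stall_warning_deg=14.0, max_disagreement_deg=5.0):
    """Decide whether automation may push the nose down.

    Both thresholds are made-up illustrative values, not figures
    from any real aircraft.
    """
    # If the two sensors tell different stories, the model of the
    # aircraft's state is unreliable, so automation stands down.
    if abs(aoa_left_deg - aoa_right_deg) > max_disagreement_deg:
        return TrimDecision(False, "sensors disagree; defer to pilots")
    # Act only when both sensors independently report a high angle.
    if min(aoa_left_deg, aoa_right_deg) >= stall_warning_deg:
        return TrimDecision(True, "both sensors report high angle of attack")
    return TrimDecision(False, "angle of attack within normal range")


if __name__ == "__main__":
    print(decide_trim(74.5, 15.3))  # one sensor wildly off
    print(decide_trim(16.0, 15.5))  # sensors agree on a high angle
    print(decide_trim(3.0, 4.0))    # normal flight
```

The design choice is that disagreement between sensors is treated as a reason to doubt the model rather than as a tie to be broken in the software's favor.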

Medical imaging AI presents a less dramatic but philosophically similar challenge. As AI systems become more accurate at reading medical images, radiologists increasingly defer to algorithmic assessments. Studies have shown that when AI and human readers disagree, the tendency is shifting toward trusting the AI, even in cases where the human assessment was correct.[8] The model's confidence can override the expert's judgment.

The Paradox of Useful Models

The statistician George Box famously observed that "all models are wrong, but some are useful."[9] This captures the essential tension. Models are simplifications of reality. That's what makes them valuable. A map that included every blade of grass would be as useless as Borges' imperial map. The power of a model lies precisely in what it leaves out.

The problem isn't that models are imperfect. It's that we forget they're imperfect. The GPS is useful because it simplifies navigation. It becomes dangerous when we trust it more than our eyes. The financial model is useful because it quantifies risk. It becomes dangerous when we trust it more than the underlying economic reality. The digital twin is useful because it simulates complex systems. It becomes dangerous when we adjust reality to match the simulation rather than the other way around.

Each of these is a cave wall. The model shows us a simplified, clean, precise representation of something messy and complex. The representation is easier to work with than reality. And gradually, imperceptibly, the representation becomes the thing we manage, optimize, and trust, while reality recedes into the background.

A few principles help maintain the right relationship between models and reality:

Remember what the model leaves out. Every model is a simplification. Knowing what's been simplified away is as important as knowing what's been included. The GPS doesn't know about the construction that started yesterday. The financial model doesn't capture panic. The digital twin doesn't feel vibrations the sensors don't measure.

When the model and reality disagree, investigate. Don't automatically trust either one. The disagreement itself is information. Sometimes the model has detected something real that observation missed. Sometimes reality has changed in ways the model hasn't captured. The answer requires looking at both.

Maintain direct observation. Models should augment perception, not replace it. The pilot who trusts instruments over sensation in a cloud is making a good decision. The pilot whose software overrides correct perception is in a badly designed system. The difference is whether the model supplements human judgment or supplants it.
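The second principle, treating disagreement as information, can be sketched as a check that surfaces mismatches between a model's predictions and measured values without deciding which side is right. The function name, the tolerance, and the temperature readings are illustrative assumptions.

```python
def find_disagreements(predicted, observed, tolerance):
    """Return (index, predicted, observed) for every reading where the
    model and the measurement differ by more than `tolerance`.

    The function deliberately does not pick a winner. It only marks
    where a person should look at both the model and the system.
    """
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must have the same length")
    return [
        (i, p, o)
        for i, (p, o) in enumerate(zip(predicted, observed))
        if abs(p - o) > tolerance
    ]


if __name__ == "__main__":
    # Hypothetical bearing temperatures: digital twin vs. physical sensor.
    twin = [70.0, 70.5, 71.0, 71.2]
    sensor = [70.2, 70.4, 78.9, 71.0]
    for index, p, o in find_disagreements(twin, sensor, tolerance=2.0):
        print(f"reading {index}: model says {p}, sensor says {o}; investigate")
```

Whether the third reading means the machine is overheating or the sensor is failing is exactly the question the check cannot answer, which is why it hands the decision to someone who can look at both.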

Borges' cartographers built a map the size of the territory and discovered it was worthless. We're building models that are smaller than reality but more trusted than reality. The map is useful precisely because it's not the territory. The moment we forget that distinction, we're back in the cave, watching shadows and calling them the world.

References

[1] Jorge Luis Borges, "On Exactitude in Science," Collected Fictions, translated by Andrew Hurley, Penguin, 1998. Originally published 1946.

[2] Greg Milner, Pinpoint: How GPS Is Changing Technology, Culture, and Our Minds, W.W. Norton, 2016.

[3] Alexis C. Madrigal, "How Google Builds Its Maps — and What It Means for the Future of Everything," The Atlantic, September 6, 2012. https://www.theatlantic.com/technology/archive/2012/09/how-google-builds-its-maps-and-what-it-means-for-the-future-of-everything/261913/

[4] Jay Bennett, "How Neighborhoods Are Fighting Off Traffic That Waze Sends Their Way," Popular Mechanics, June 6, 2016. https://www.popularmechanics.com/home/a21212/how-homeowners-fighting-waze/

[5] Felix Salmon, "Recipe for Disaster: The Formula That Killed Wall Street," Wired, February 23, 2009. https://www.wired.com/2009/02/wp-quant/

[6] Michael Grieves and John Vickers, "Digital Twin: Mitigating Unpredictable, Undesirable Emergent Behavior in Complex Systems," in Transdisciplinary Perspectives on Complex Systems, Springer, 2017. https://doi.org/10.1007/978-3-319-38756-7_4

[7] "Ethiopian Airlines Flight 302," Wikipedia, accessed March 2026. https://en.wikipedia.org/wiki/Ethiopian_Airlines_Flight_302

[8] Thomas W. Loehfelm and Peter Kuo, "Artificial Intelligence and Machine Learning in Radiology: Opportunities, Challenges, Pitfalls, and Criteria for Success," Journal of the American College of Radiology, Vol. 14, No. 12, 2017. https://doi.org/10.1016/j.jacr.2017.06.001

[9] George E.P. Box, "Robustness in the Strategy of Scientific Model Building," Robustness in Statistics, Academic Press, 1979.