The Lottery Problem: When Is Random Actually Fair?

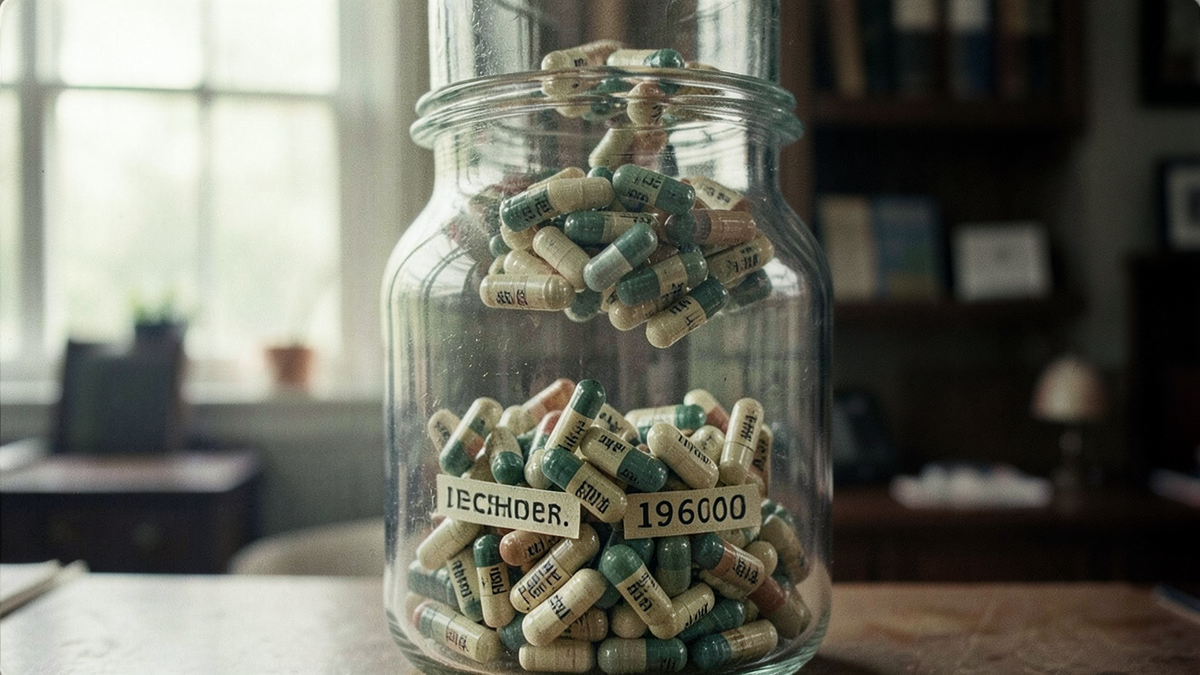

On December 1, 1969, the United States held its first draft lottery since World War II. The Selective Service System placed 366 capsules, one for each possible birthday including February 29, into a large glass container. The capsules were drawn one at a time, and the order determined which young men would be called to serve in Vietnam. It was supposed to be the fairest possible method: pure chance, no human bias, no favoritism.

Within weeks, statisticians noticed a problem. Men born in the last months of the year were significantly more likely to be drafted than those born in January or February. According to reports, the capsules had been placed into the container month by month, January first and December last, and then insufficiently mixed. The December capsules, sitting near the top, were drawn disproportionately early.[1]

The randomness wasn't random. And because it wasn't random, it wasn't fair.

The Appeal of Chance

The idea of using randomness to make fair decisions is ancient. The Athenians selected most public officials by lot using a device called the kleroterion, a stone slab with slots and a tube that released black and white balls randomly. They believed that elections favored the wealthy and well-connected, while sortition, selection by lot, gave every citizen an equal chance.[2]

The logic is intuitive: if nobody chooses, nobody can be biased. A coin doesn't care about your race, your wealth, or your connections. A lottery ball doesn't know whose name is printed on it. Randomness seems to offer a kind of procedural purity that human judgment can't match.

This intuition drives the use of randomized selection across modern institutions. Jury pools are typically drawn randomly from voter rolls and driver's license records. School choice programs in some cities use lottery systems when demand exceeds capacity. Clinical trials randomly assign patients to treatment and control groups to eliminate selection bias.

In each case, the appeal is the same: randomness as a shield against human bias. But the shield only works if the randomness is genuine, and if the pool it draws from is itself fair.

Random From a Biased Pool

The 1969 draft lottery illustrates a principle that applies far beyond military conscription: randomness inherits the properties of its inputs. A perfectly random draw from a biased pool produces biased outcomes.

Consider jury selection. In the United States, jury pools are typically drawn from voter registration lists and Department of Motor Vehicles records. This sounds neutral, but research has suggested that these lists may systematically underrepresent certain demographics. People who don't register to vote or don't have driver's licenses, groups that tend to skew younger, lower-income, and disproportionately minority, may be less likely to appear in the pool.[3] The random selection from the pool is fair. The pool itself may not be.
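To make the arithmetic concrete, here is a minimal sketch of how uneven list coverage changes the composition of a pool before any random draw takes place. The group names and all of the numbers are hypothetical, chosen only for illustration; they are not taken from the research cited above.

```python
def pool_shares(population_share, list_coverage):
    """Return each group's share of the selection pool.

    population_share: group -> fraction of the eligible population
    list_coverage:    group -> fraction of that group that appears on the source lists
    """
    # Weight of each group among the people who actually appear on the lists.
    on_list = {g: population_share[g] * list_coverage[g] for g in population_share}
    total = sum(on_list.values())
    return {g: weight / total for g, weight in on_list.items()}


# Hypothetical figures, for illustration only.
population = {"group_a": 0.70, "group_b": 0.30}
coverage = {"group_a": 0.90, "group_b": 0.60}

shares = pool_shares(population, coverage)
for group, share in shares.items():
    print(f"{group}: {population[group]:.0%} of population, {share:.1%} of pool")
```

Under these assumed figures, group_b makes up 30 percent of the population but only about 22 percent of the pool. A perfectly uniform draw from that pool reproduces the 22 percent on average, not the 30.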

The same pattern appears in algorithmic systems. A content moderation platform that randomly audits user posts for policy violations is procedurally fair: every post has an equal chance of review. But if the platform's content policies disproportionately flag certain types of speech, or if the user base is skewed in ways that correlate with demographics, the outcomes of that random audit may still fall unevenly across groups.

This is the fundamental tension: procedural fairness (every element has an equal chance) and outcome fairness (the results are equitable across groups) are not the same thing. Randomness can guarantee the first without delivering the second.

When Random Doesn't Feel Random

In 2014, Spotify's engineering team faced a complaint that would have amused any philosopher of probability. Users were reporting that the shuffle feature wasn't random. They kept hearing songs by the same artist back to back, or the same track appearing suspiciously often.

The shuffle was, in fact, random. That was the problem. True randomness produces clusters. If you flip a fair coin 100 times, you are very likely to see five heads in a row at some point (the chance is roughly 80 percent), and a run of six is about as likely as not. That's not a sign of bias; it's a mathematical property of random sequences. But human perception expects randomness to look evenly distributed, with no clusters, no repeats, no patterns. We expect randomness to look like what it isn't.
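The claim about runs can be checked exactly rather than by intuition. The sketch below computes the probability that a sequence of fair coin flips contains a run of a given length, using a small dynamic program over the length of the current streak. The function names are my own, and the code assumes independent flips of a fair coin.

```python
def prob_heads_run(flips, run_length):
    """Probability that `flips` fair coin tosses contain at least
    `run_length` consecutive heads."""
    # state[j] = probability that no qualifying run has occurred yet
    # and the sequence currently ends in exactly j heads.
    state = [1.0] + [0.0] * (run_length - 1)
    for _ in range(flips):
        new_state = [0.0] * run_length
        new_state[0] = 0.5 * sum(state)        # tails resets the streak
        for j in range(run_length - 1):
            new_state[j + 1] = 0.5 * state[j]  # heads extends the streak
        # Heads from a streak of run_length - 1 completes a run; that
        # probability mass is deliberately dropped from the table.
        state = new_state
    return 1.0 - sum(state)


def prob_any_run(flips, run_length):
    """Probability of at least `run_length` identical results in a row
    (heads or tails). Requires run_length >= 2."""
    # A run of k identical flips is a run of k - 1 "same as the previous
    # flip" events among the flips - 1 adjacent pairs, and each pair
    # matches with probability 1/2 independently.
    return prob_heads_run(flips - 1, run_length - 1)


for k in (5, 6):
    print(f"run of {k} heads in 100 flips:    {prob_heads_run(100, k):.3f}")
    print(f"run of {k} of either side:        {prob_any_run(100, k):.3f}")
```

Counting runs of either heads or tails pushes the probabilities higher still, which is why streaks in a shuffled playlist are the norm rather than the exception.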

According to Spotify's engineering team, they responded by making the shuffle less random. They implemented an algorithm that deliberately spaces out songs by the same artist and avoids recent repeats, producing a sequence that feels more random to human listeners precisely because it's more structured.[4]
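The general idea of spacing out an artist's songs can be sketched in a few lines. This is a simplified illustration of the approach, not Spotify's actual code: the function name, the jitter range, and the (artist, title) track format are all assumptions. Each artist's songs are spread roughly evenly across the playlist, with a random starting offset and a little jitter, and the tracks are then sorted by their assigned positions.

```python
import random
from collections import defaultdict


def spread_shuffle(tracks, rng=None):
    """Shuffle (artist, title) pairs so that songs by the same artist
    tend to be spaced apart. A simplified sketch, not a production
    algorithm."""
    rng = rng or random.Random()

    by_artist = defaultdict(list)
    for artist, title in tracks:
        by_artist[artist].append(title)

    placed = []
    for artist, titles in by_artist.items():
        rng.shuffle(titles)                 # random order within the artist
        n = len(titles)
        offset = rng.random() / n           # random start inside the first slot
        for i, title in enumerate(titles):
            jitter = rng.uniform(-0.1, 0.1) / n   # assumed jitter range
            position = offset + i / n + jitter    # roughly even spacing
            placed.append((position, artist, title))

    placed.sort(key=lambda item: item[0])
    return [(artist, title) for _, artist, title in placed]


playlist = [
    ("Artist A", "Song 1"), ("Artist A", "Song 2"), ("Artist A", "Song 3"),
    ("Artist B", "Song 4"), ("Artist B", "Song 5"),
    ("Artist C", "Song 6"),
]
print(spread_shuffle(playlist, random.Random(7)))
```

The output is still unpredictable, but it is no longer a uniform draw over all orderings: arrangements that cluster one artist's songs have been made much less likely by design.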

This gap between statistical randomness and perceived randomness has implications beyond music. In clinical trials, researchers sometimes use stratified randomization rather than simple randomization to ensure that treatment and control groups are balanced on key variables. Simple randomization might, by chance, put most of the older patients in one group and most of the younger patients in another. The result would be statistically valid but potentially misleading. Stratified randomization constrains the randomness to produce more balanced groups, sacrificing pure chance for practical fairness.
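A minimal sketch of stratified randomization follows, assuming two arms and a single stratifying variable. The function name, the age cutoff, and the patient records are hypothetical. Patients are shuffled within each stratum and then assigned to the arms alternately, so each stratum is split as evenly as possible.

```python
import random
from collections import defaultdict


def stratified_assign(patients, stratum_of, rng=None):
    """Randomly assign patients to two arms, balancing within each stratum.

    patients:   iterable of hashable patient records
    stratum_of: function mapping a patient to a stratum label
    Returns a dict mapping each patient to "treatment" or "control".
    """
    rng = rng or random.Random()

    strata = defaultdict(list)
    for patient in patients:
        strata[stratum_of(patient)].append(patient)

    assignment = {}
    for members in strata.values():
        rng.shuffle(members)       # random order within the stratum
        arms = ["treatment", "control"]
        rng.shuffle(arms)          # which arm gets the extra patient in an odd-sized stratum
        for i, patient in enumerate(members):
            assignment[patient] = arms[i % 2]
    return assignment


def age_band(patient):
    # Hypothetical stratifying rule: (patient_id, age) records split at 65.
    return "65+" if patient[1] >= 65 else "under 65"


patients = [(1, 34), (2, 71), (3, 58), (4, 80), (5, 45), (6, 67), (7, 29)]
groups = stratified_assign(patients, age_band, random.Random(3))
```

Who ends up in which arm is still left to chance, but the age balance between the arms is not: within each stratum the two groups can differ by at most one patient.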

The philosophical question is whether this is still "random" in any meaningful sense. If you constrain randomness to produce the outcomes you want, you're making choices about what fairness looks like. The randomness becomes a tool in service of a value judgment, not a replacement for one.

Sortition and the Lottery of Power

The most radical application of randomness to fairness is sortition: selecting political representatives by lot rather than by election. Ancient Athens practiced this for most government positions, and a growing movement of political theorists argues that modern democracies should consider it as well.

The argument is straightforward. Elections, critics suggest, tend to favor candidates who are wealthy, charismatic, well-connected, or skilled at campaigning, none of which necessarily correlate with good governance. A randomly selected legislature would be demographically representative in a way that elected bodies rarely are: proportional representation of women, minorities, income levels, and occupations would emerge naturally from a sufficiently large random sample.[5]
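How large is "sufficiently large"? A rough answer comes from the standard error of a sample proportion. The sketch below assumes simple random sampling from a population much larger than the body being selected, which real sortition schemes may not follow exactly; the function name and the body sizes are illustrative.

```python
import math


def share_standard_error(true_share, body_size):
    """Standard deviation of a group's share in a randomly selected body,
    assuming simple random sampling from a much larger population."""
    return math.sqrt(true_share * (1.0 - true_share) / body_size)


# Illustrative body sizes; 0.5 is the worst case for variability.
for size in (50, 150, 500):
    spread = share_standard_error(0.5, size)
    print(f"{size} members: typical deviation of about {spread:.1%}")
```

The deviation shrinks with the square root of the body's size, so quadrupling the number of seats only halves the expected gap between the body and the population it is drawn from.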

Several countries have experimented with sortition in limited contexts. Ireland's Citizens' Assembly, made up largely of randomly selected citizens, is often credited with helping to pave the way for the 2018 referendum on abortion rights. France's Convention Citoyenne pour le Climat brought together 150 randomly selected citizens to propose climate policy. Proponents have generally described the deliberations in these experiments as thoughtful and representative, though the resulting recommendations don't always survive the legislative process.

The philosophical tension is between competence and representation. Elections are, in theory, a filter for competence: voters choose candidates they believe will govern well. Sortition removes that filter, trusting that a representative sample of the population will, collectively, make reasonable decisions. It's a bet that the wisdom of a random crowd outweighs the expertise of a self-selected elite.

A/B Testing and the Ethics of Random Assignment

Large technology companies reportedly run thousands of A/B tests at any given time, randomly assigning users to different versions of a product to measure which performs better. Change the color of a button, the wording of a notification, the ranking of search results, and see which version produces more clicks, more purchases, more engagement.

The randomization is essential: without it, you can't distinguish the effect of the change from pre-existing differences between user groups. But the ethics of random assignment in commercial contexts are murkier than in clinical trials.
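One common way to implement this kind of assignment is to hash a user identifier together with an experiment name. That is a deterministic stand-in for a coin flip: a user always sees the same variant, while the split across users behaves like a random one. The sketch below is an assumption about how such a system might be built, not a description of any particular company's method; the function and experiment names are hypothetical.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("A", "B")):
    """Assign a user to a variant by hashing the experiment name and user id.

    The same (user_id, experiment) pair always yields the same variant,
    and different experiments split users independently of one another.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# Hypothetical experiment name and user ids.
counts = {"A": 0, "B": 0}
for n in range(10_000):
    counts[assign_variant(f"user-{n}", "button-color-test")] += 1
print(counts)
```

Because the assignment depends only on the hash, it cannot be influenced by anything known about the user, which is the property that lets differences between the groups be attributed to the change under test.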

In 2014, researchers published a study in the Proceedings of the National Academy of Sciences describing an experiment conducted on a major social media platform. According to the paper, the platform had manipulated the emotional content of roughly 700,000 users' news feeds, showing some users more positive posts and others more negative posts, to test whether emotional states were contagious through social networks. The study reported that they were. The publication prompted widespread criticism: commentators argued that users hadn't meaningfully consented to being subjects in a psychological experiment, and the random assignment meant that some people had been deliberately shown more negative content.[6]

The randomization made the experiment scientifically valid. It didn't make it ethical. Random assignment distributes the burden of experimentation equally, but it doesn't address whether the experimentation should happen at all.

This tension scales. When a platform A/B tests a change to its recommendation algorithm, some users randomly receive a version that may be worse for them: less relevant results, more addictive design patterns, more extreme content. The randomization ensures that the test is fair in the procedural sense. Whether it's fair in the moral sense depends on what's being tested and what the stakes are.

The Limits of Chance

Randomness is a powerful tool for fairness, but it's not a substitute for it. The 1969 draft lottery was random in intent but biased in execution. Jury pools are randomly drawn from lists that may not represent the population. Spotify's shuffle was statistically random but didn't feel random to listeners. A/B tests are procedurally random but can be ethically questionable.

The common thread is that randomness operates within a system, and the fairness of the outcome depends on the fairness of the system. A random draw from a biased pool is still biased. A random assignment to an unethical experiment is still unethical. Randomness can remove certain kinds of bias, the kind that comes from individual human judgment, but it can't remove structural bias, the kind that's built into the inputs.

Athens itself illustrates the point. Its lottery was open only to citizens, a category that, according to historians, excluded women, slaves, and foreigners.[2] The randomness was fair among those who were eligible. The eligibility criteria were not.

The question for anyone designing a system that uses randomness for fairness is not just "is the selection random?" but "is the pool representative?" and "are the stakes appropriate?" and "who decided what counts as fair?" Randomness can answer the first question. It can't answer the others. Those require human judgment, the very thing randomness was supposed to replace.

References

[1] "Almanac: The 1969 draft lottery," CBS News, December 1, 2019. https://www.cbsnews.com/news/almanac-the-1969-draft-lottery-vietnam-war/

[2] Mogens Herman Hansen, The Athenian Democracy in the Age of Demosthenes, University of Oklahoma Press, 1999. https://www.amazon.com/Athenian-Democracy-Age-Demosthenes-Structure/dp/0806131438

[3] "No Records, No Right: Discovery & the Fair Cross-Section Guarantee," Iowa Law Review, 101 Iowa L. Rev. 1719, 2016. https://ilr.law.uiowa.edu/print/volume-101-issue-5/no-records-no-right-discovery-and-the-fair-cross-section-guarantee

[4] Lukáš Poláček, "How to Shuffle Songs," Spotify Engineering Blog, February 28, 2014. https://web.archive.org/web/20250110171606/https://engineering.atspotify.com/2014/02/how-to-shuffle-songs/

[5] Alexander Guerrero, "Against Elections: The Lottocratic Alternative," Philosophy & Public Affairs, 42(2), 135–178, 2014. https://en.wikipedia.org/wiki/Sortition

[6] Adam D. I. Kramer, Jamie E. Guillory, and Jeffrey T. Hancock, "Experimental evidence of massive-scale emotional contagion through social networks," Proceedings of the National Academy of Sciences, 111(24), 8788–8790, 2014. https://en.wikipedia.org/wiki/Emotional_contagion#Social_networking