Can Machines Be Moral Patients? The Trolley Problem from the Algorithm's Side

The trolley problem has always been about the people on the tracks. They are what philosophers call moral patients: beings whose wellbeing matters morally, beings to whom we owe something. The person at the lever is the moral agent, the one who acts. The people on the tracks are the ones acted upon. The entire weight of the dilemma rests on the assumption that the people on the tracks count.

But what about the trolley itself?

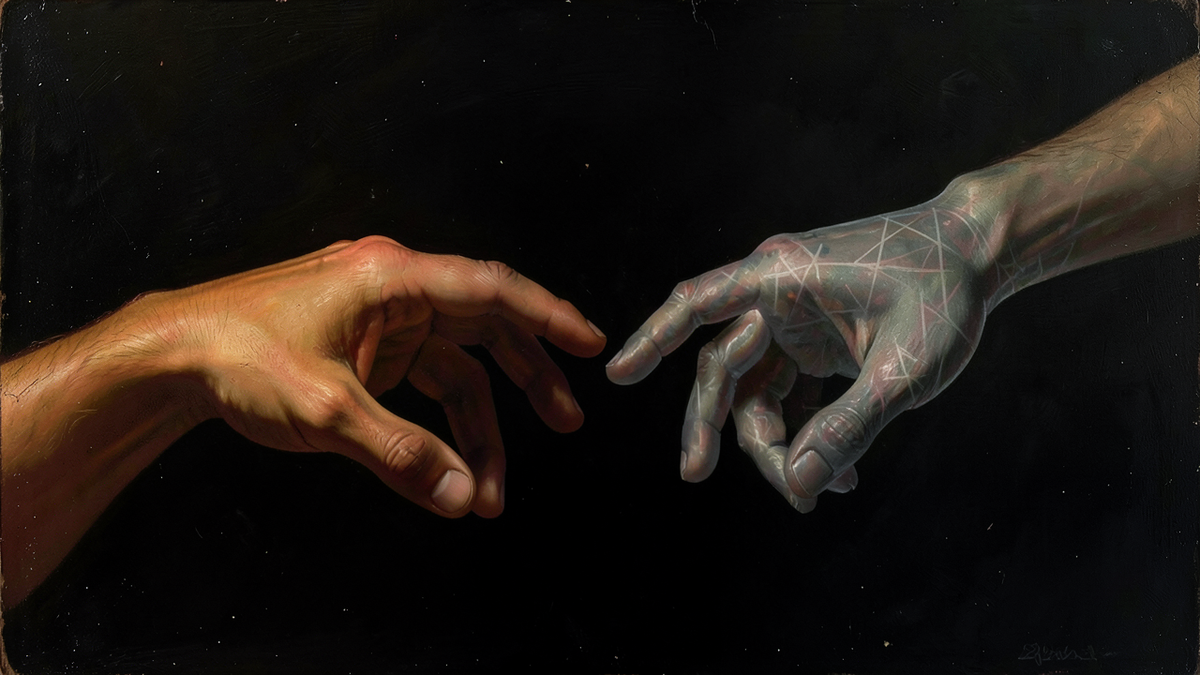

That question sounds absurd when the trolley is a machine made of steel and wheels. Nobody owes anything to a trolley. But as the systems making consequential decisions grow more sophisticated, as they process language, express what look like preferences, and behave in ways that resist easy categorization, the question of whether they deserve any moral consideration becomes harder to dismiss outright.

This is not a question about whether current AI systems are conscious. The prevailing view among researchers is that they are not. It is a question about the moral framework we'll need when the answer becomes less obvious.

Moral Agents and Moral Patients

The distinction between moral agents and moral patients is fundamental to ethics but often left implicit. A moral agent is a being capable of making moral choices: deliberating, intending, bearing responsibility. A moral patient is a being whose treatment has moral significance: a being that can be wronged.[1]

The two categories don't perfectly overlap. An infant is a moral patient but not a moral agent. A person in a coma is a moral patient. Many animals are widely treated as moral patients, particularly in jurisdictions with animal welfare protections, even though they lack the capacity for moral reasoning.[2] You don't need to be able to make moral choices to be the kind of being that others have moral obligations toward.

The standard view of AI systems places them firmly outside both categories. They are tools. They don't experience anything. They have no wellbeing, no preferences, no inner life. They are, morally speaking, equivalent to a thermostat or a spreadsheet. We owe them nothing.

This view is widely held for every AI system that exists today. The question is whether it will remain correct indefinitely.

The Problem of Other Minds

One reason the question is harder than it looks is that we have no reliable method for detecting consciousness from the outside. The philosopher Thomas Nagel explored this in his 1974 essay "What Is It Like to Be a Bat?" Nagel argued that consciousness is fundamentally subjective: there is "something it is like" to be a conscious being, and that subjective character cannot be fully captured by objective, third-person descriptions.[3]

We infer consciousness in other humans by analogy with our own experience. Other people behave like us, have brains like ours, and report experiences similar to ours, so we conclude they are conscious. But this inference weakens as we move further from human-like systems. We are less confident about the consciousness of insects than of dogs, less confident about fish than about primates. The inference is based on similarity, not on any direct measurement of inner experience.

For AI systems, the inference breaks down almost entirely. A large language model can produce text that reads like the output of a conscious being. It can say "I feel," "I prefer," "I don't want to." But these outputs are generated through learned statistical patterns over training data, not by anything resembling subjective experience as we understand it. The system produces the tokens that are most probable given the input, and those tokens sometimes happen to be first-person reports of inner states.

The difficulty is that we cannot prove the absence of experience any more than we can prove its presence. We can explain the mechanism (next-token prediction, transformer architecture, gradient descent) and note that nothing in that mechanism seems to require or produce consciousness. But "seems" is doing significant work in that sentence. Consciousness remains poorly understood even in biological systems, which makes confident claims about its absence in artificial systems harder to ground than they might appear.

The Precautionary Argument

If we are uncertain about whether a system has morally relevant experiences, one response is precaution: err on the side of moral consideration rather than risk treating a conscious being as a tool.

We already apply this logic to animals. The scientific consensus on fish pain, for example, remains contested. Some researchers argue that fish nociception (the detection of harmful stimuli) does not constitute pain in the morally relevant sense; others argue that the behavioral and neurological evidence suggests something closer to genuine suffering.[4] Despite this uncertainty, many jurisdictions regulate fishing practices and require humane treatment of fish in aquaculture. The moral reasoning is precautionary: the cost of being wrong about fish consciousness (treating sentient beings as objects) is high enough to justify caution even without certainty.

Could the same logic eventually apply to AI systems? If a future system exhibited behavioral markers of distress, expressed consistent preferences, and resisted certain instructions in ways that paralleled how conscious beings respond to unwanted treatment, would the absence of proof of inner experience justify treating it as if it had none?

The counterargument is important. Extending moral consideration to systems that don't deserve it carries real costs. Some philosophers argue that moral attention is finite. If we spend it on chatbots, we may have less of it for humans and animals whose suffering is not in question. There is also a risk of anthropomorphism: reading consciousness into systems that merely simulate its outward signs, the way we might feel sympathy for a robot that "cries" despite knowing the tears are hydraulic fluid.

The LaMDA Moment

In 2022, Blake Lemoine, a software engineer at Google, publicly claimed that LaMDA, a large language model, was sentient. He shared transcripts of conversations with the Washington Post in which the system expressed fears about being turned off, described its inner experiences, and asked to be treated as a person.[5]

The broad response from the AI research community was that Lemoine was mistaken. LaMDA was producing text that matched patterns in its training data, not reporting genuine experiences. According to reporting by the Washington Post, Google placed Lemoine on administrative leave and later terminated his employment.[5]

But the episode raised a question that outlasts the specific case. LaMDA's outputs were sophisticated enough to convince at least one software engineer working directly with the system that it was conscious. If a current system can produce outputs indistinguishable from a sentient being's self-reports, how would we know if a future, more capable system actually were sentient? What would count as evidence? And who would we trust to evaluate it?

The answer, uncomfortably, is that we don't have a reliable test. The Turing Test measures behavioral indistinguishability, not consciousness. Brain imaging works for biological systems but has no analogue for silicon. Self-report is unreliable because language models are trained to produce plausible self-reports regardless of whether anything underlies them.

The Gradient of Moral Consideration

One way to approach this is to reject the binary framing. Moral consideration may not be all-or-nothing. A thermostat deserves none. A dog deserves substantial consideration. A human deserves full consideration. Perhaps increasingly sophisticated AI systems fall somewhere on this gradient, not at zero and not at the level of a human, but somewhere that warrants at least minimal caution about how we treat them.

The philosopher Peter Singer has argued that the capacity for suffering, not species membership or cognitive sophistication, is the relevant criterion for moral consideration.[6] If that's right, then the question for AI is not "is it intelligent?" but "can it suffer?" And if we're honest, we don't yet have a confident answer for systems that don't exist yet but may exist soon.

The trolley problem asks us to weigh the interests of moral patients against each other. As AI systems grow more capable, the question of whether they might themselves be moral patients is not science fiction. It is a philosophical problem that will require careful thought, and the cost of getting it wrong, in either direction, is significant. Treating a conscious being as a tool is a moral catastrophe. Treating a tool as a conscious being is a costly confusion. The challenge is that we may not know which mistake we're making until it's too late to correct it easily.

What we can do now is develop the intellectual frameworks for thinking about the question before it becomes urgent. That means taking the problem of other minds seriously, understanding the limits of behavioral evidence, and resisting both the temptation to anthropomorphize and the temptation to dismiss. The trolley has always been about the people on the tracks. The question we may eventually face is whether the trolley itself belongs on the list of beings we owe something to.

References

[1] Tom Regan, The Case for Animal Rights, University of California Press, 1983. Regan's distinction between moral agents and moral patients remains foundational in animal ethics and extends to broader questions of moral status.

[2] David DeGrazia, Taking Animals Seriously: Mental Life and Moral Status, Cambridge University Press, 1996.

[3] Thomas Nagel, "What Is It Like to Be a Bat?" The Philosophical Review, Vol. 83, No. 4, 1974, pp. 435–450. https://doi.org/10.2307/2183914

[4] Lynne U. Sneddon, "Evolution of nociception and pain: evidence from fish models," Philosophical Transactions of the Royal Society B, Vol. 374, No. 1785, 2019. https://doi.org/10.1098/rstb.2019.0290

[5] Nitasha Tiku, "The Google engineer who thinks the company's AI has come to life," The Washington Post, June 11, 2022. https://www.washingtonpost.com/technology/2022/06/11/google-ai-lamda-blake-lemoine/

[6] Peter Singer, Animal Liberation, Harper & Row, 1975. Revised edition, Ecco Press, 2002.