Leaving the Cave: How to See Past the Shadows

Around 375 BCE, Plato described a group of prisoners who had spent their entire lives chained in a cave, facing a wall. Behind them, a fire cast shadows of objects carried along a walkway. The prisoners watched the shadows, named them, debated them, and built their entire understanding of reality around them. The shadows were all they knew.[1]

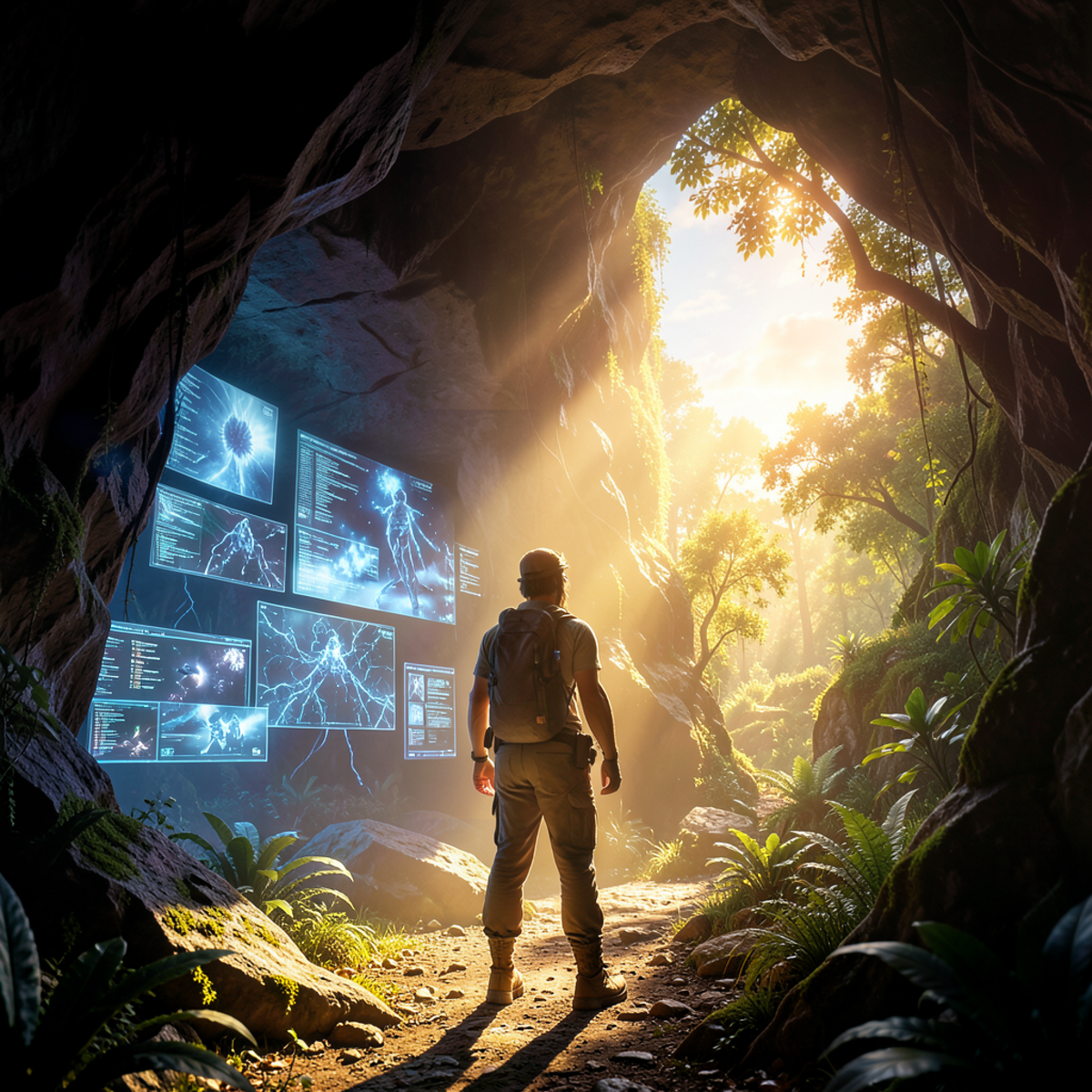

One prisoner was freed. He turned around, saw the fire, and realized the shadows were projections. He climbed out of the cave and saw the sun. The real world was overwhelming, painful to look at, and incomparably richer than the shadows he'd spent his life studying. When he returned to tell the others, they decided the journey had ruined his eyesight and that leaving the cave wasn't worth the attempt.

This week, we've explored five ways technology creates modern caves: metrics that substitute for the things they measure, abstractions that hide the systems they simplify, social media that replaces lived experience with curated performance, AI that generates shadows from other shadows, and models that become more trusted than the reality they represent. Each case follows the same pattern. A representation stands in for reality. The representation is useful. And gradually, we forget it's a representation at all.

The Pattern

Across all five cases, the same dynamics appear.

Representations replace reality. We interact with the metric, not the outcome. The API, not the system. The feed, not the person. The AI summary, not the source. The model, not the territory. In each case, the representation is easier to work with, more convenient, more precise-looking. That's what makes it useful. That's also what makes it dangerous.

Convenience drives cave-dwelling. Nobody is forced into these caves. We choose them because representations are genuinely easier to manage than reality. A dashboard is faster than walking the factory floor. An abstraction is more productive than understanding every layer. A social media feed is more accessible than in-person connection. An AI summary is quicker than reading five papers. A model is more tractable than direct observation. The cave is comfortable by design.

Optimization targets shadows. When we optimize for the representation instead of the reality, the two diverge. Goodhart's Law in metrics. Reward hacking in AI. Velocity gaming in Agile. Engagement optimization in social media. In each case, the system gets better at hitting the target while the thing the target was supposed to represent drifts further away.
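The divergence is easy to reproduce in a toy setting. The sketch below is illustrative only: the two scoring functions, the fixed effort budget, and all the numbers are assumptions made up for this example, not measurements of any real team. It shows a process that moves effort toward whatever raises the dashboard number, and records what happens to the outcome the number was meant to stand for.

```python
def proxy_metric(speed_effort, quality_effort):
    # Hypothetical dashboard number: tickets closed. It rewards speed only.
    return 10 * speed_effort


def true_outcome(speed_effort, quality_effort):
    # Hypothetical real goal: problems actually resolved.
    # It needs both speed and quality, so it is a product of the two.
    return 10 * speed_effort * quality_effort


def optimize_for_proxy(steps=5, budget=1.0, shift=0.1):
    """Shift effort toward speed each step, because that always raises the proxy.

    Returns a list of (proxy, truth) pairs, one per step.
    """
    speed = budget / 2
    quality = budget - speed
    history = []
    for _ in range(steps):
        history.append((proxy_metric(speed, quality), true_outcome(speed, quality)))
        # The "optimizer" only looks at the proxy, so it takes effort from quality.
        speed = min(budget, speed + shift)
        quality = budget - speed
    return history


if __name__ == "__main__":
    for step, (proxy, truth) in enumerate(optimize_for_proxy()):
        print(f"step {step}: proxy={proxy:.1f} truth={truth:.2f}")
```

Under these assumptions the proxy rises at every step while the true outcome falls at every step. Nothing in the loop is malicious; it simply never looks at anything except the shadow.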

Expertise means seeing the fire. The developer who understands what's beneath the abstraction, the analyst who knows what the metric doesn't capture, the researcher who checks AI output against primary sources, the engineer who validates the model against observation: these are people who have turned around in the cave. They use the shadows, but they know what's casting them.

Plato's Stages of Knowledge

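Plato didn't just describe the cave. In the Divided Line passage that closes Book VI of the Republic, immediately before the allegory, he laid out a progression of understanding in four stages, and those stages map surprisingly well onto how we relate to technology's representations.[1]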

The first stage, eikasia, is imagination or illusion. This is accepting shadows at face value. Trusting the dashboard without questioning what it measures. Using abstractions without wondering what they hide. Believing social media represents reality. Accepting AI output as knowledge. Taking the model as truth. At this stage, the representation and reality are indistinguishable because you've never considered they might differ.

The second stage, pistis, is belief or trust. This is recognizing that shadows are representations of something real, without fully understanding the relationship. You know metrics are proxies. You know abstractions hide complexity. You know social media is curated. You know AI generates patterns rather than knowledge. You know models simplify. But this knowledge is abstract. You haven't yet investigated the gap between representation and reality in any specific case.

The third stage, dianoia, is reasoning or understanding. This is grasping the relationship between the shadow and what casts it. You know which metrics matter and why, and which ones are misleading. You understand what's beneath the abstraction layer you work with. You can identify the gap between someone's online persona and their likely reality. You understand how language models work well enough to predict where they'll fail. You know the assumptions your model makes and where they might break. This is functional expertise: knowing the representation well enough to use it wisely.

The fourth stage, noesis, is true knowledge or direct insight. This is understanding reality itself, not through representations but directly. Measuring what matters rather than what's easy. Understanding systems from hardware to application. Connecting with people authentically rather than through digital mediation. Building knowledge from primary sources rather than summaries. Observing reality directly rather than through models. This stage is rare, difficult, and often uncomfortable. It's also where genuine understanding lives.

Most of us operate between the second and third stages for most things. We know, in principle, that representations aren't reality. We occasionally investigate the gap. We rarely achieve direct understanding across all the domains where we rely on representations.

The Cost of Staying in the Cave

When we mistake representations for reality, the consequences compound.

We optimize for metrics that don't capture what matters, and the things that matter deteriorate while the numbers improve. We build on abstractions we don't understand, and when they leak, we can't diagnose the failure. We compare our unedited lives to others' curated highlights and feel inadequate. We accept AI-generated content as knowledge without verification and build on foundations that may not connect to anything real. We trust models over direct observation and sometimes adjust reality to match the model rather than the other way around.

None of these failures is inevitable. Each is the result of forgetting that the representation isn't the thing itself. The dashboard isn't the business. The API isn't the system. The feed isn't the life. The summary isn't the knowledge. The map isn't the territory.

Leaving the Cave

Plato's freed prisoner didn't destroy the cave. He didn't smash the fire or break the chains of the other prisoners. He simply saw what was outside and understood the relationship between the shadows and reality. That understanding changed everything, even though the shadows remained.

The same applies to technology's caves. The goal isn't to abandon metrics, abstractions, social media, AI, or models. They're useful. Often indispensable. The goal is to maintain awareness of what they are: representations, not reality. Useful simplifications, not complete pictures.

A few practices help sustain that awareness.

Go to the source. Regularly bypass the representation and engage with the thing it represents. Talk to customers, not just their NPS scores. Read the code beneath the abstraction. Meet people in person, not just through their feeds. Check AI output against primary sources. Validate models against direct observation. This is how you calibrate whether the shadows still resemble reality.

Learn one layer down. You don't need to understand everything beneath every representation you use. But understanding the layer directly below your usual level of abstraction gives you the ability to diagnose problems when the representation breaks down. If you manage by dashboard, understand how the metrics are calculated. If you code in a high-level language, understand the runtime. If you use AI tools, understand how they generate output.
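For the dashboard case, "one layer down" can be as small as recomputing the headline number yourself and looking at what it averages away. The following is a minimal sketch with invented data: the latency sample, the 1000 ms threshold, and the function names are all hypothetical, chosen only to make the point visible.

```python
from statistics import mean, median

# Hypothetical latency sample in milliseconds: 98 fast requests and 2 very slow ones.
latencies_ms = [100] * 98 + [5000] * 2


def dashboard_average(samples):
    # The single number a dashboard might show.
    return mean(samples)


def one_layer_down(samples, slow_threshold_ms=1000):
    # The same data, described without collapsing it into one average.
    slow = [s for s in samples if s >= slow_threshold_ms]
    return {
        "median_ms": median(samples),
        "worst_ms": max(samples),
        "slow_share": len(slow) / len(samples),
    }


if __name__ == "__main__":
    print("dashboard average:", dashboard_average(latencies_ms))
    print("one layer down:", one_layer_down(latencies_ms))
```

With this sample the average comes out at 198 ms, which describes no actual request: the typical one took 100 ms, and two in a hundred took five seconds. Knowing how the number is computed is what tells you which question it cannot answer.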

Question the representation. Develop the habit of asking what any given representation leaves out. What doesn't the metric capture? What does the abstraction hide? What is the feed not showing you? What might the AI have gotten wrong? Where might the model's assumptions break? These questions don't require deep expertise. They require the awareness that the question is worth asking.

Embrace the discomfort. Plato was explicit about this: leaving the cave is painful. The sunlight blinds at first. Direct reality is messier, more complex, and less comfortable than clean representations. Understanding what's beneath the abstraction takes effort. Authentic connection is more vulnerable than curated performance. Primary knowledge requires more work than AI summaries. The discomfort is the price of understanding.

The Freed Prisoner's Dilemma

Plato's freed prisoner faces a choice: stay in the sunlight alone, or return to the cave where everyone agrees the shadows are real.

This plays out in technology constantly. The engineer who understands the system deeply but can't convince management that the metrics are misleading. The developer who knows the abstraction is hiding a critical flaw but gets told "it works, don't touch it." The person who steps away from social media and loses social connections. The researcher who insists on primary sources when everyone else uses AI summaries. The analyst who questions the model when the model is what everyone trusts.

Seeing past the shadows is valuable but socially costly. The cave is comfortable. The shadows are familiar. And the other prisoners don't want to hear that their reality is incomplete.

The Paradox of Useful Shadows

Here's where Plato's Cave gets complicated in the technology context: the shadows are genuinely useful.

Abstractions enable us to build complex systems. Metrics help us make decisions at scale. Models let us understand things we can't observe directly. Social media connects people across distances. AI processes vast amounts of information. These aren't failures. They're achievements. The problem was never the shadows themselves.

The problem is forgetting they're shadows.

Plato wrote the Allegory of the Cave to illustrate the difference between appearance and reality, between opinion and knowledge. He couldn't have imagined a world where we'd build caves within caves within caves, layers of abstraction, representation, and mediation so deep that reality itself becomes hard to locate.

But that's where we are. We manage by dashboard, build on abstractions we don't fully understand, experience others through curated feeds, learn from AI-generated summaries, and trust models more than our own observations. Each layer is useful. Each layer is also a cave wall.

The shadows on the wall are useful. Just don't mistake them for the sun.

References

[1] Plato, The Republic, Books VI-VII, translated by G.M.A. Grube, revised by C.D.C. Reeve, Hackett Publishing, 1992. Originally composed c. 375 BCE.