Rolling the Dice a Million Times: Monte Carlo Methods and Decisions Under Uncertainty

Sometime around 1946, the mathematician Stanislaw Ulam was recovering from an illness and playing solitaire. He tried to calculate the probability of winning a particular layout using combinatorial methods, the kind of exhaustive counting that mathematicians prefer. The numbers were intractable. There were too many possible arrangements, too many branching paths.

So he tried something different. Instead of calculating the answer, he played the game many times and counted how often he won. The more games he played, the closer his observed win rate came to the true probability. He didn't need to solve the problem analytically. He just needed to sample it enough times.[1]

Ulam reportedly shared the idea with John von Neumann, who recognized its potential immediately. They were both working at Los Alamos on nuclear weapons research, where they faced problems in neutron diffusion that were similarly intractable by analytical methods. Von Neumann helped formalize the approach and, according to accounts from the period, the method was given the code name "Monte Carlo," after the famous casino in Monaco, because of its reliance on random sampling.[1]

The insight was deceptively simple: when you can't solve a problem exactly, you can often approximate it by throwing random numbers at it. The more numbers you throw, the better the approximation gets.

The Logic of Random Sampling

Monte Carlo methods rest on a mathematical foundation called the law of large numbers. In its simplest form, the law states that as you increase the number of random samples from a distribution, the average of those samples converges on the true expected value. Flip a fair coin ten times and you might get seven heads. Flip it ten thousand times and you'll get very close to fifty percent.
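Stated a little more formally (the notation here is ours, introduced only for illustration): for independent samples $X_1, \dots, X_n$ drawn from the same distribution with a finite mean $\mu$, the sample average approaches $\mu$ as the number of samples grows.

$$
\bar{X}_n \;=\; \frac{1}{n}\sum_{i=1}^{n} X_i \;\longrightarrow\; \mu = \mathbb{E}[X] \qquad \text{as } n \to \infty
$$

For the coin, $X_i$ is 1 for heads and 0 for tails, so $\mu = 0.5$ and $\bar{X}_n$ is simply the observed fraction of heads.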

This convergence is what makes Monte Carlo methods work. If you want to know the probability of some event, or the expected value of some quantity, and you can't calculate it directly, you can simulate the process many times with random inputs and measure the outcome. The answer won't be exact, but with enough samples it will be close, and statistical confidence intervals let you quantify how close it is likely to be.
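As a minimal sketch of that recipe, the snippet below estimates a probability by simulation and attaches an approximate 95% confidence interval. The dice event, the function names, and the sample count are all illustrative choices, and the interval uses the usual normal approximation for a proportion, which is only reasonable when the sample is large.

```python
import math
import random

def estimate_probability(event, n_samples, seed=0):
    """Estimate P(event) by simulation, with an approximate 95% confidence interval."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_samples) if event(rng))
    p_hat = hits / n_samples
    # Normal-approximation standard error of a sample proportion.
    std_err = math.sqrt(p_hat * (1.0 - p_hat) / n_samples)
    half_width = 1.96 * std_err
    return p_hat, (p_hat - half_width, p_hat + half_width)

def two_dice_at_least_ten(rng):
    """Illustrative event: two fair dice sum to 10 or more (exact probability 6/36)."""
    return rng.randint(1, 6) + rng.randint(1, 6) >= 10

p_hat, (low, high) = estimate_probability(two_dice_at_least_ten, 100_000)
print(f"estimate = {p_hat:.4f}, 95% CI = ({low:.4f}, {high:.4f}), exact = {6 / 36:.4f}")
```

The dice problem is easy to solve by hand, which is the point: it lets you check the simulated answer against the exact one before trusting the same machinery on a problem you can't solve.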

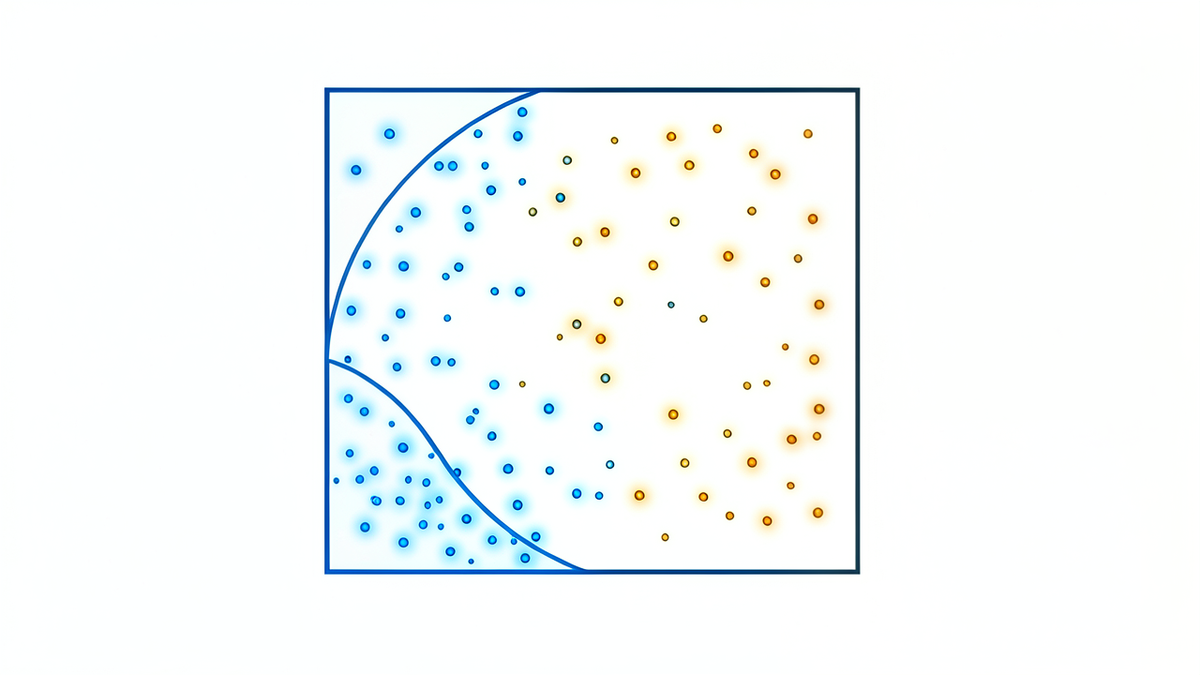

The classic demonstration is estimating the value of pi. Imagine a square with a quarter-circle drawn inside it, centered on one corner and with a radius equal to the side of the square. If you scatter points uniformly at random across the square, the fraction that land inside the quarter-circle will approximate pi/4, the ratio of the two areas. With a hundred points, the estimate is rough. With a million, it's typically accurate to two or three decimal places. The points are random, but the aggregate converges on a precise mathematical constant.
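The experiment fits in a few lines. This is a sketch using the unit square and a quarter-circle of radius one; the function name and the fixed seed are our own choices, made so the run is repeatable.

```python
import random

def estimate_pi(n_points, seed=0):
    """Estimate pi from the fraction of random points inside a quarter-circle."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_points):
        x, y = rng.random(), rng.random()  # uniform point in the unit square
        if x * x + y * y <= 1.0:           # inside the quarter-circle of radius 1
            inside += 1
    # inside / n_points approximates pi / 4, the ratio of the two areas.
    return 4.0 * inside / n_points

for n in (100, 10_000, 1_000_000):
    print(f"{n:>9} points -> pi is roughly {estimate_pi(n):.5f}")
```

Changing the seed changes the individual estimates, but the pattern holds: each hundredfold increase in points buys roughly one more reliable digit.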

This is the philosophical core of Monte Carlo: randomness, given enough volume, produces reliable knowledge. Individual samples are meaningless. The aggregate is informative. Noise, in sufficient quantity, becomes signal.

The Manhattan Project and Beyond

The original application at Los Alamos involved simulating the behavior of neutrons as they scattered through fissile material. Each neutron's path depends on probabilistic interactions: it might be absorbed, reflected, or pass through, with probabilities determined by the material's properties. Tracking every possible path analytically was impossible. But simulating thousands of individual neutron histories, each following random paths weighted by the known probabilities, produced reliable estimates of how the system would behave.[1]
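A heavily simplified toy version conveys the idea. The sketch below follows neutrons through a one-dimensional slab: each neutron travels a random distance, then is either absorbed or scattered forward or backward. The slab thickness, the absorption probability, and the one-dimensional geometry are all made-up assumptions for illustration and bear no relation to the actual Los Alamos calculations.

```python
import random

def simulate_slab(n_neutrons, thickness=2.0, p_absorb=0.3, seed=0):
    """Toy 1D neutron transport; distances are measured in mean free paths."""
    rng = random.Random(seed)
    counts = {"transmitted": 0, "reflected": 0, "absorbed": 0}
    for _ in range(n_neutrons):
        x, direction = 0.0, 1.0  # enter at the left face, moving right
        while True:
            # Distance to the next collision is exponentially distributed.
            x += direction * rng.expovariate(1.0)
            if x >= thickness:
                counts["transmitted"] += 1
                break
            if x < 0.0:
                counts["reflected"] += 1
                break
            if rng.random() < p_absorb:
                counts["absorbed"] += 1
                break
            # Otherwise the neutron scatters: pick a new direction at random.
            direction = rng.choice((-1.0, 1.0))
    return {fate: count / n_neutrons for fate, count in counts.items()}

print(simulate_slab(20_000))
```

No single simulated neutron says anything useful. The fractions across thousands of histories are the estimate.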

The method's usefulness extended far beyond nuclear physics. Over the following decades, Monte Carlo simulations were adopted across fields including statistical mechanics, particle physics, and operations research. The approach proved particularly valuable for problems involving high-dimensional spaces, where traditional numerical methods struggle with what's sometimes called the "curse of dimensionality": the number of grid points needed to cover a space grows exponentially with the number of dimensions. The error of a Monte Carlo estimate, by contrast, shrinks in proportion to the square root of the number of samples whatever the dimension, so it doesn't suffer from this scaling problem in the same way.
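To make the contrast concrete, here is a sketch that integrates a simple function over a 20-dimensional unit cube. The integrand (a sum of squares, whose exact integral is the dimension divided by three) and the sample count are illustrative choices picked so the answer can be checked.

```python
import math
import random

def mc_integrate(f, dim, n_samples, seed=0):
    """Estimate the integral of f over the unit hypercube [0, 1]^dim."""
    rng = random.Random(seed)
    total = 0.0
    total_sq = 0.0
    for _ in range(n_samples):
        value = f([rng.random() for _ in range(dim)])
        total += value
        total_sq += value * value
    mean = total / n_samples
    variance = max(total_sq / n_samples - mean * mean, 0.0)
    # Return the estimate and its standard error.
    return mean, math.sqrt(variance / n_samples)

def sum_of_squares(point):
    return sum(x * x for x in point)

dim = 20
estimate, std_err = mc_integrate(sum_of_squares, dim, 50_000)
print(f"Monte Carlo: {estimate:.3f} +/- {std_err:.3f} (exact: {dim / 3:.3f})")
print(f"A grid with 10 points per axis would need {10 ** dim:.0e} evaluations")
```

Fifty thousand random points are enough for a usable answer here, while even a coarse grid of ten points per axis is far out of reach.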

Finance and the Illusion of Prediction

One of the most consequential applications of Monte Carlo methods is in financial modeling. Banks, hedge funds, and insurance companies use Monte Carlo simulations to estimate the risk of complex financial instruments, stress-test portfolios, and price derivatives.

The basic approach: define a model of how asset prices, interest rates, or default probabilities might evolve over time, then run thousands or millions of random simulations of possible futures. Each simulation follows a different random path. The distribution of outcomes across all simulations gives you an estimate of the range of possible results and their probabilities.
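One common textbook choice for the price model is geometric Brownian motion, sketched below. The starting price, drift, volatility, and monthly time step are hypothetical numbers for illustration, not a recommendation or a description of any particular institution's model.

```python
import math
import random
import statistics

def simulate_terminal_prices(s0, mu, sigma, years, steps_per_year, n_paths, seed=0):
    """Simulate final prices under geometric Brownian motion."""
    rng = random.Random(seed)
    dt = 1.0 / steps_per_year
    n_steps = int(years * steps_per_year)
    drift = (mu - 0.5 * sigma ** 2) * dt
    vol = sigma * math.sqrt(dt)
    finals = []
    for _ in range(n_paths):
        log_price = math.log(s0)
        for _ in range(n_steps):
            # Each step adds a deterministic drift and a random shock.
            log_price += drift + vol * rng.gauss(0.0, 1.0)
        finals.append(math.exp(log_price))
    return finals

finals = sorted(simulate_terminal_prices(
    s0=100.0, mu=0.05, sigma=0.2, years=1, steps_per_year=12, n_paths=20_000))
print(f"mean   = {statistics.mean(finals):.2f}")
print(f"median = {statistics.median(finals):.2f}")
print(f"5th percentile  = {finals[int(0.05 * len(finals))]:.2f}")
print(f"95th percentile = {finals[int(0.95 * len(finals))]:.2f}")
```

The output is a spread of outcomes rather than a single forecast, which is exactly what the simulation is meant to deliver.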

Value at Risk (VaR), a widely used risk metric, often relies on Monte Carlo simulation. A bank might run ten thousand simulated scenarios for its portfolio and report that, in 99% of those scenarios, the loss doesn't exceed a certain threshold. Regulators and risk managers use these numbers to set capital requirements and make decisions about exposure.
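In code, that report boils down to sorting simulated losses and reading off a percentile. The sketch below assumes a single portfolio whose one-day return is normally distributed; the portfolio value, the volatility, and the function name are hypothetical, and the normality assumption is exactly the kind of modeling choice discussed next.

```python
import math
import random

def monte_carlo_var(portfolio_value, daily_mean, daily_vol,
                    confidence=0.99, n_scenarios=10_000, seed=0):
    """One-day Value at Risk from simulated, normally distributed returns."""
    rng = random.Random(seed)
    # A loss is the negative of the simulated profit.
    losses = sorted(
        -portfolio_value * rng.gauss(daily_mean, daily_vol)
        for _ in range(n_scenarios)
    )
    # Loss that is not exceeded in `confidence` of the scenarios.
    index = min(n_scenarios - 1, math.ceil(confidence * n_scenarios) - 1)
    return losses[index]

var_99 = monte_carlo_var(portfolio_value=1_000_000, daily_mean=0.0, daily_vol=0.01)
print(f"99% one-day VaR is roughly {var_99:,.0f}")
```

With these inputs the simulated figure should land near the analytical value of about 2.33 standard deviations, or roughly 23,000 on a portfolio of one million.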

But Monte Carlo simulations are only as good as the models they sample from. The 2008 financial crisis exposed this limitation. Many risk models assumed that asset price movements followed normal distributions and that correlations between assets were stable. When the crisis hit, according to analyses published afterward, correlations spiked, tail events that the models treated as near-impossible occurred repeatedly, and the simulated distributions turned out to be poor representations of actual market behavior.[2]

By most accounts, the simulations themselves had been running correctly. The random sampling was sound. The problem, as critics argued afterward, was the model: the assumptions about what the random variables represented and how they related to each other. Monte Carlo can tell you what happens if your model is right. It can't tell you if your model is right.
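A small experiment shows how this can go wrong even when the sampling is flawless. Below, a normal model and a fat-tailed alternative are given the same standard deviation, and we count how often each produces a loss worse than four standard deviations. The Student-t distribution with three degrees of freedom is our stand-in for a fat-tailed world; it is an illustrative assumption, not a fit to 2008 market data.

```python
import math
import random

def student_t(rng, df):
    """Draw from a Student-t distribution: a normal over a scaled chi-square."""
    chi_square = rng.gammavariate(df / 2.0, 2.0)
    return rng.gauss(0.0, 1.0) / math.sqrt(chi_square / df)

def tail_frequency(draw, threshold, n_samples):
    """Fraction of draws that fall below -threshold."""
    return sum(1 for _ in range(n_samples) if draw() < -threshold) / n_samples

rng = random.Random(0)
df = 3
# A Student-t with df > 2 has variance df / (df - 2); rescale it to variance 1.
unit_variance_scale = math.sqrt((df - 2) / df)

normal_freq = tail_frequency(lambda: rng.gauss(0.0, 1.0), 4.0, 200_000)
fat_freq = tail_frequency(lambda: unit_variance_scale * student_t(rng, df), 4.0, 200_000)

print(f"normal model:     {normal_freq:.6f}")
print(f"fat-tailed model: {fat_freq:.6f}")
```

Both simulations are "correct." They disagree by orders of magnitude about how often disaster strikes, and nothing inside either simulation can tell you which one describes the real world.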

Game AI and Strategic Exploration

In 2016, a program called AlphaGo, developed by DeepMind, defeated Lee Sedol, one of the world's strongest Go players. The victory was widely reported as a landmark in artificial intelligence, partly because Go had long been considered too complex for brute-force computation: the number of possible board positions far exceeds the number of atoms in the observable universe.[3]

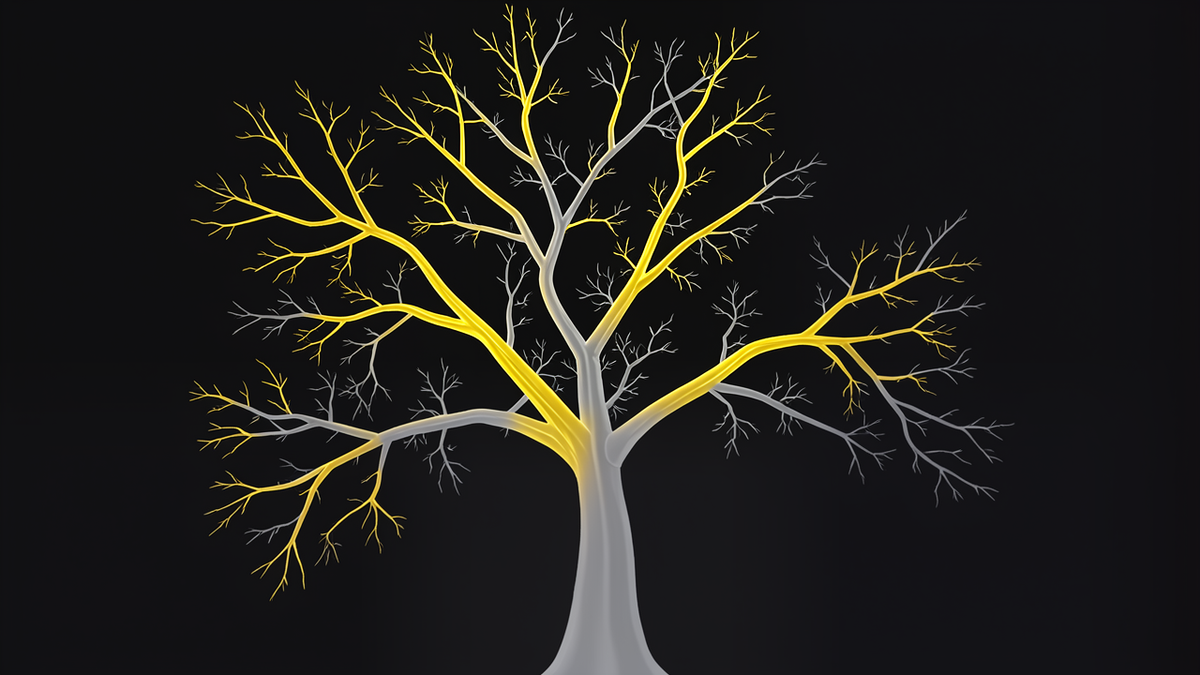

A key component of AlphaGo's approach was Monte Carlo Tree Search (MCTS), a technique that uses random sampling to evaluate positions in a game tree. Instead of trying to analyze every possible sequence of moves, MCTS plays out random games from a given position and uses the results to estimate which moves are most promising. Moves that lead to more wins in random playouts get explored more deeply. Moves that lead to losses get visited less and less often.
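Go is far too large for a short example, so the sketch below runs a basic MCTS on a tiny stand-in game: a pile of stones, each player removes one to three, and whoever takes the last stone wins. It uses the common UCB1 rule to balance exploring new moves against revisiting good ones. The game, the class and function names, and the exploration constant are our own choices, and this is a textbook-style version rather than AlphaGo's implementation.

```python
import math
import random

class Node:
    def __init__(self, stones, parent=None, move=None):
        self.stones = stones    # stones left for the player about to move
        self.parent = parent
        self.move = move        # move that led here from the parent
        self.children = []
        self.untried = [m for m in (1, 2, 3) if m <= stones]
        self.visits = 0
        self.wins = 0.0         # wins for the player who moved INTO this node

def ucb1(child, parent_visits, c=1.4):
    """Average win rate plus an exploration bonus for rarely visited moves."""
    return child.wins / child.visits + c * math.sqrt(math.log(parent_visits) / child.visits)

def random_playout(stones, rng):
    """Play uniformly random moves; return True if the player to move first wins."""
    first_player_to_move = True
    while stones > 0:
        stones -= rng.randint(1, min(3, stones))
        if stones == 0:
            return first_player_to_move
        first_player_to_move = not first_player_to_move
    return False  # no stones left at the start: the previous player already won

def mcts_best_move(stones, iterations=5000, seed=0):
    rng = random.Random(seed)
    root = Node(stones)
    for _ in range(iterations):
        node = root
        # 1. Selection: descend through fully expanded nodes using UCB1.
        while not node.untried and node.children:
            node = max(node.children, key=lambda ch: ucb1(ch, node.visits))
        # 2. Expansion: add one untried move as a new child.
        if node.untried:
            move = node.untried.pop()
            child = Node(node.stones - move, parent=node, move=move)
            node.children.append(child)
            node = child
        # 3. Simulation: finish the game with random moves.
        mover_wins = random_playout(node.stones, rng)
        # 4. Backpropagation: flip the result at each level going up.
        result = 0.0 if mover_wins else 1.0
        while node is not None:
            node.visits += 1
            node.wins += result
            result = 1.0 - result
            node = node.parent
    # The most visited move is the one the search found most promising.
    return max(root.children, key=lambda ch: ch.visits).move

print("Best move with 5 stones:", mcts_best_move(5))
```

The program is never told the winning strategy for this game, which is to leave your opponent a multiple of four stones. It finds that move from playout statistics alone.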

The elegance of MCTS is that it doesn't need to understand the game in any deep sense. It just needs to be able to simulate random games and count wins. The strategic intelligence emerges from the statistics of many random samples, not from any explicit encoding of strategy. AlphaGo paired MCTS with deep neural networks that suggested promising moves and evaluated positions, which made the search far more selective than purely random playouts, but the core principle remained: explore the space by sampling, then focus on what works.[3]

This is Monte Carlo's philosophical contribution to AI: you don't always need to understand a problem to navigate it. Sometimes you just need to sample it enough times.

Weather, Climate, and Ensemble Forecasting

Weather forecasting is, at its core, a problem of sensitive dependence on initial conditions. Small measurement errors in temperature, pressure, or humidity can compound over time into wildly different forecasts. This is the chaotic behavior that Edward Lorenz described in 1963: deterministic equations whose long-range behavior is unpredictable in practice, because you can never measure the initial conditions precisely enough.

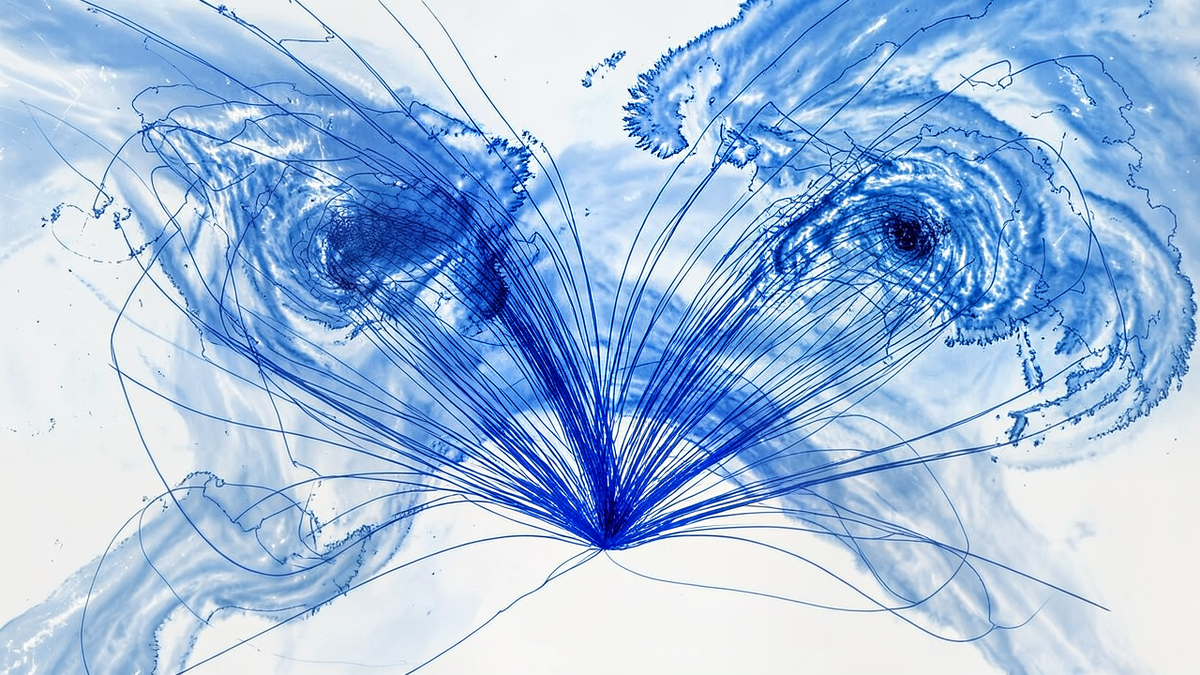

Modern weather services address this through ensemble forecasting, a Monte Carlo approach. Instead of running a single forecast from the best available initial conditions, they run dozens of forecasts, each starting from slightly different initial conditions that reflect the uncertainty in the measurements. The spread of the ensemble tells forecasters how confident they should be: if all runs agree, the forecast is reliable; if they diverge, uncertainty is high.[4]
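The mechanics can be imitated with a toy chaotic system. The sketch below uses the logistic map as a stand-in for a weather model, which it emphatically is not, and starts fifty ensemble members from a "best guess" plus tiny random measurement errors. The map, the error size, and the ensemble size are all illustrative assumptions.

```python
import random
import statistics

def logistic_step(x, r=4.0):
    """One step of the logistic map, a simple chaotic system for r = 4."""
    return r * x * (1.0 - x)

def run_ensemble(best_guess=0.4, obs_error=1e-6, n_members=50, n_steps=40, seed=0):
    """Return the ensemble spread (standard deviation) at each time step."""
    rng = random.Random(seed)
    # Each member starts from the best guess plus a small random perturbation.
    members = [best_guess + rng.gauss(0.0, obs_error) for _ in range(n_members)]
    spread = [statistics.pstdev(members)]
    for _ in range(n_steps):
        members = [logistic_step(x) for x in members]
        spread.append(statistics.pstdev(members))
    return spread

spread = run_ensemble()
for step in (0, 5, 10, 20, 40):
    print(f"step {step:>2}: spread = {spread[step]:.6f}")
```

Early on the members agree almost perfectly, so a forecast at that range deserves confidence. By the later steps they have scattered across the whole range of possible values, and the honest forecast is a distribution rather than a number.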

Climate modeling extends this further. Climate projections typically involve running models under different scenarios of greenhouse gas emissions, economic development, and policy choices. Each scenario is itself run multiple times with different random perturbations to capture the range of natural variability. The result is not a single prediction but a distribution of possible futures, each conditional on the assumptions that produced it.

This is Monte Carlo as epistemic humility. The models don't claim to predict the future. They claim to map the space of plausible futures and estimate how likely each one is, given what we know and what we don't.

The Limits of Simulation

Monte Carlo methods are powerful, but they carry assumptions that are easy to overlook.

The most fundamental is that the random sampling must be representative of the actual distribution. If you're simulating financial risk but your model doesn't account for extreme events, your simulation will underestimate risk. If you're simulating climate but your model doesn't capture certain feedback loops, your projections will miss important scenarios. The randomness is only as good as the model it samples from.

There's also the question of computational cost. Monte Carlo methods converge slowly: to halve the error, you typically need to quadruple the number of samples. For problems with many dimensions or rare events, the number of samples needed can be enormous. Techniques like importance sampling and stratified sampling can help by focusing computational effort on the most informative regions of the space, but they introduce their own assumptions and complexities.
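The slow convergence follows from the fact that the standard error of a plain Monte Carlo average scales as one over the square root of the sample count. Rare events make this painful, and the sketch below shows how importance sampling helps for one textbook case: the probability that a standard normal variable exceeds 4. Instead of sampling where the event almost never happens, we sample from a normal centered on the threshold and reweight each hit by the ratio of the two densities. The choice of proposal distribution and the function names are illustrative assumptions.

```python
import math
import random
from statistics import NormalDist

def naive_tail_estimate(threshold, n_samples, seed=0):
    """Plain Monte Carlo: count how often a standard normal exceeds the threshold."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_samples) if rng.gauss(0.0, 1.0) > threshold)
    return hits / n_samples

def importance_tail_estimate(threshold, n_samples, seed=0):
    """Importance sampling with a normal proposal centered on the threshold."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.gauss(threshold, 1.0)  # sample where the rare event lives
        if x > threshold:
            # Weight = target density / proposal density
            #        = exp(-threshold * x + threshold**2 / 2).
            total += math.exp(-threshold * x + 0.5 * threshold ** 2)
    return total / n_samples

exact = 1.0 - NormalDist().cdf(4.0)
print(f"exact      = {exact:.3e}")
print(f"naive      = {naive_tail_estimate(4.0, 100_000):.3e}")
print(f"importance = {importance_tail_estimate(4.0, 100_000):.3e}")
```

With the same number of samples, the naive estimate rests on a handful of hits while the reweighted one uses about half of its draws. The catch is the one noted above: a badly chosen proposal can make the estimate worse, not better.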

And there's a subtler philosophical issue. Monte Carlo methods produce probabilistic answers: not "this will happen" but "this has a 73% chance of happening." This is honest, but it can be uncomfortable. Decision-makers often want certainty, and the temptation to treat a Monte Carlo estimate as a prediction rather than a distribution is persistent.

The Wisdom of Many Rolls

Monte Carlo methods represent a particular philosophical stance toward uncertainty: instead of trying to eliminate it, you embrace it. You admit that you can't predict the future, can't solve the equation, can't enumerate every possibility. So you sample. You roll the dice a million times and see what patterns emerge.

The approach works because of a deep mathematical truth: randomness, in aggregate, is informative. A single random sample tells you almost nothing. But the distribution of many samples tells you a great deal, and for problems too complex for exact methods it is often the only practical route to an answer.

This is the paradox at the heart of Monte Carlo: we use randomness to reduce uncertainty. We throw dice to make better decisions. We embrace what we can't control in order to understand what we can.

Ulam, playing solitaire during his convalescence, stumbled onto something that would reshape how we model nuclear reactions, price financial instruments, forecast weather, and build game-playing artificial intelligence. The insight was that you don't always need to solve a problem to understand it. Sometimes you just need to play it enough times.

References

[1] "Monte Carlo method," Wikipedia. https://en.wikipedia.org/wiki/Monte_Carlo_method

[2] Felix Salmon, "Recipe for Disaster: The Formula That Killed Wall Street," Wired, February 23, 2009. https://www.wired.com/2009/02/wp-quant/

[3] David Silver et al., "Mastering the game of Go with deep neural networks and tree search," Nature, 529, 484–489, 2016. https://www.nature.com/articles/nature16961

[4] "Fact sheet: Ensemble weather forecasting," European Centre for Medium-Range Weather Forecasts (ECMWF), March 23, 2017. https://www.ecmwf.int/en/about/media-centre/news/2017/fact-sheet-ensemble-weather-forecasting